Fast and Scalable Distributed Deep Convolutional Autoencoder for fMRI Big Data Analytics

Mar 04, 2018

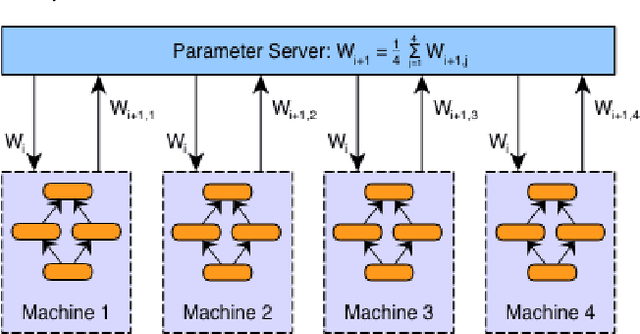

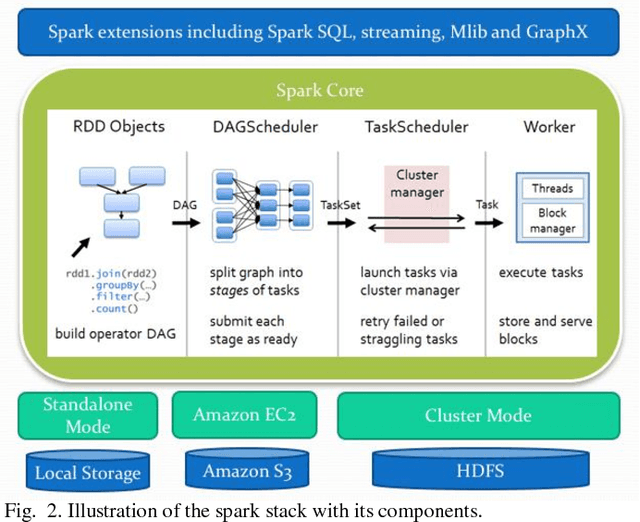

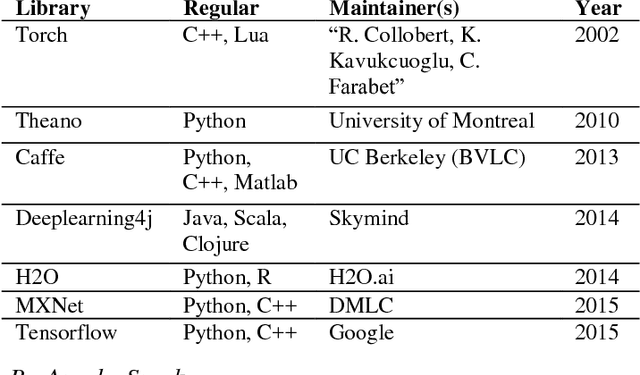

In recent years, analysis of task-based fMRI (tfMRI) data has become an essential tool for understanding brain function and networks. However, the sheer size of tfMRI data, its intrinsically complex structure, and the lack of ground truth for the underlying neural activity make modeling tfMRI data challenging. Previously proposed data-modeling methods, including Independent Component Analysis (ICA) and Sparse Dictionary Learning, provide only a weakly established model based on blind source separation, under the strong assumption that the original fMRI signals can be linearly decomposed into time series components with corresponding spatial maps. Meanwhile, analyzing and learning from a large amount of tfMRI data from a variety of subjects remains computationally demanding, even with advances in computational hardware. Building on the Convolutional Neural Network (CNN), a robust method for learning high-level abstractions from low-level data such as tfMRI time series, in this work we propose a novel, fast, and scalable framework for a distributed deep Convolutional Autoencoder model. This model aims both to learn the complex hierarchical structure of tfMRI data and to leverage the processing power of multiple GPUs in a distributed fashion. To implement the model, we have created an enhanced processing pipeline on top of Apache Spark and the TensorFlow library, leveraging a very large cluster of GPU machines. Experimental results from applying the model to Human Connectome Project (HCP) data show that the proposed model is efficient and scalable for tfMRI big data analytics, thus enabling data-driven extraction of hierarchical neuroscientific information from massive fMRI data in the future.
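To make the idea of a convolutional autoencoder on a tfMRI time series more concrete, the following is a minimal NumPy sketch of a single forward pass: one 1D convolutional encoder layer with max pooling, followed by an upsampling decoder layer. It is not the architecture from the paper. The series length (128), kernel width (9), number of filters (8), pooling factor (2), and the random untrained weights are all assumptions chosen for illustration, and the distributed Spark/TensorFlow training pipeline is not shown.

```python
import numpy as np

def conv1d_same(x, kernels):
    """Zero-padded 1D convolution (cross-correlation form, as in most CNN libraries).

    x: (length, in_ch); kernels: (width, in_ch, out_ch) -> (length, out_ch).
    """
    width, in_ch, out_ch = kernels.shape
    pad = width // 2
    xp = np.pad(x, ((pad, width - 1 - pad), (0, 0)))  # pad the time axis only
    out = np.zeros((x.shape[0], out_ch))
    for t in range(x.shape[0]):
        window = xp[t:t + width]  # (width, in_ch)
        out[t] = np.tensordot(window, kernels, axes=([0, 1], [0, 1]))
    return out

def encode(x, k_enc, pool=2):
    """Convolution -> ReLU -> non-overlapping max pooling along time."""
    h = np.maximum(conv1d_same(x, k_enc), 0.0)
    length = (h.shape[0] // pool) * pool
    return h[:length].reshape(-1, pool, h.shape[1]).max(axis=1)

def decode(z, k_dec, pool=2):
    """Nearest-neighbor upsampling -> convolution back to one channel."""
    up = np.repeat(z, pool, axis=0)
    return conv1d_same(up, k_dec)

rng = np.random.default_rng(0)
x = rng.standard_normal((128, 1))             # hypothetical single-voxel time series
k_enc = 0.1 * rng.standard_normal((9, 1, 8))  # untrained encoder filters (assumed sizes)
k_dec = 0.1 * rng.standard_normal((9, 8, 1))  # untrained decoder filters (assumed sizes)

code = encode(x, k_enc)                # compressed feature maps, shape (64, 8)
recon = decode(code, k_dec)            # reconstruction, shape (128, 1)
mse = float(np.mean((recon - x) ** 2)) # reconstruction loss that training would minimize
```

In a trained model, the filters would be fitted by minimizing the reconstruction loss over many voxels and subjects. In a data-parallel setting, each worker would typically compute gradients on its own partition of the data before the updates are combined.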
