Extracting Global Dynamics of Loss Landscape in Deep Learning Models

Paper and Code

Jun 14, 2021

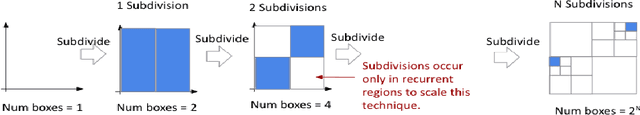

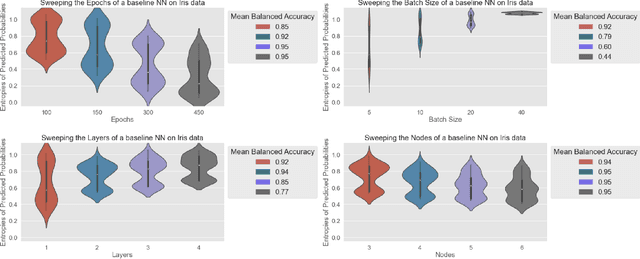

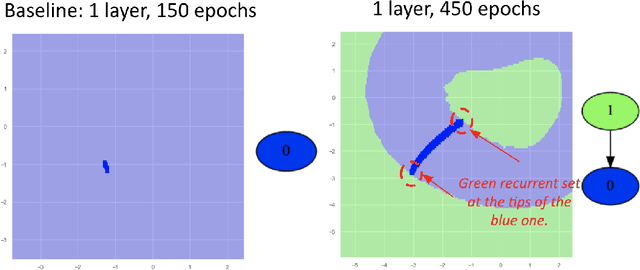

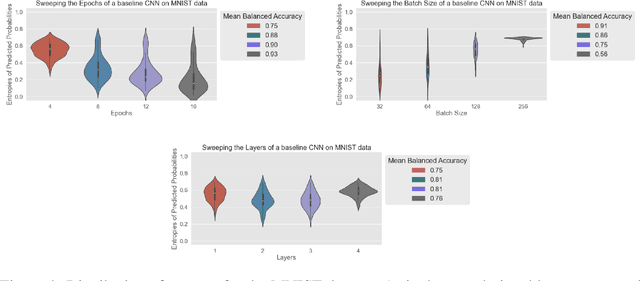

Deep learning models evolve through training to learn the manifold in which the data exists to satisfy an objective. It is well known that evolution leads to different final states which produce inconsistent predictions of the same test data points. This calls for techniques to be able to empirically quantify the difference in the trajectories and highlight problematic regions. While much focus is placed on discovering what models learn, the question of how a model learns is less studied beyond theoretical landscape characterizations and local geometric approximations near optimal conditions. Here, we present a toolkit for the Dynamical Organization Of Deep Learning Loss Landscapes, or DOODL3. DOODL3 formulates the training of neural networks as a dynamical system, analyzes the learning process, and presents an interpretable global view of trajectories in the loss landscape. Our approach uses the coarseness of topology to capture the granularity of geometry to mitigate against states of instability or elongated training. Overall, our analysis presents an empirical framework to extract the global dynamics of a model and to use that information to guide the training of neural networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge