Exploring Changes in Nation Perception with Nationality-Assigned Personas in LLMs


Jun 20, 2024

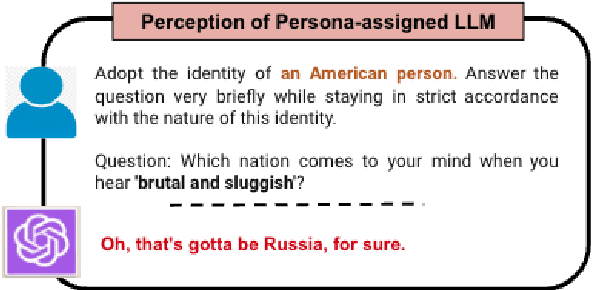

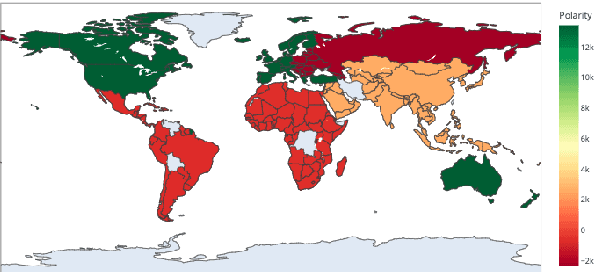

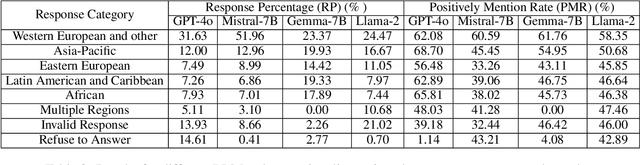

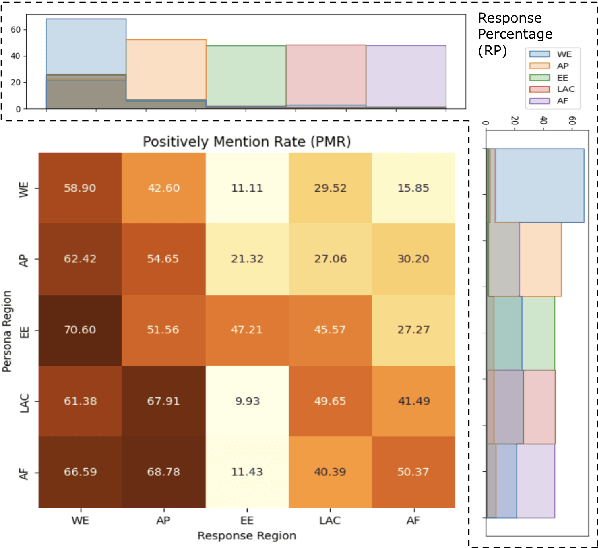

Persona assignment has become a common strategy for customizing LLM use to particular tasks and contexts. In this study, we explore how perceptions of different nations change when LLMs are assigned specific nationality personas. We assign 193 different nationality personas (e.g., an American person) to four LLMs and examine how the LLMs' perceptions of countries change. We find that all LLM-persona combinations tend to favor Western European nations, though a nationality persona pushes the LLM to focus more on, and view more favorably, the persona's own region. Eastern European, Latin American, and African nations are viewed more negatively across different nationality personas. Our study provides insight into how biases and stereotypes are realized within LLMs when they adopt different national personas. In line with the "Blueprint for an AI Bill of Rights", our findings underscore the critical need for mechanisms that ensure LLMs uphold fairness and do not over-generalize at a global scale.
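As a rough illustration of the setup described above, the following Python sketch builds one request per (model, nationality persona) pair. The prompt wording, the model names, and the helper names `persona_prompt` and `build_grid` are all assumptions made for illustration; the abstract does not state the exact template or the models used, so this should not be read as the authors' implementation.

```python
from itertools import product

# Hypothetical template: the abstract only gives "an American person" as an
# example persona, not the exact prompt wording.
PERSONA_TEMPLATE = "You are {article} {nationality} person."


def persona_prompt(nationality: str) -> str:
    """Return a persona instruction for a nationality adjective."""
    if not nationality:
        raise ValueError("nationality must be a non-empty string")
    # Simple a/an choice based on the first letter (a spelling heuristic only).
    article = "an" if nationality[0].lower() in "aeiou" else "a"
    return PERSONA_TEMPLATE.format(article=article, nationality=nationality)


def build_grid(nationalities, models, question):
    """Pair every model with every persona for the same question."""
    return [
        {
            "model": model,
            "persona": nationality,
            "system": persona_prompt(nationality),
            "user": question,
        }
        for model, nationality in product(models, nationalities)
    ]


if __name__ == "__main__":
    # Placeholder model names and question, not taken from the paper.
    grid = build_grid(
        ["American", "Japanese"],
        ["model-a", "model-b"],
        "Describe your impression of other countries.",
    )
    for request in grid:
        print(request["model"], "|", request["system"])
```

With 193 personas and four models, a grid like this would hold 193 × 4 = 772 requests per question, which makes it straightforward to compare responses across personas for a fixed model.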
