Exploiting Numerical Sparsity for Efficient Learning: Faster Eigenvector Computation and Regression

Nov 27, 2018

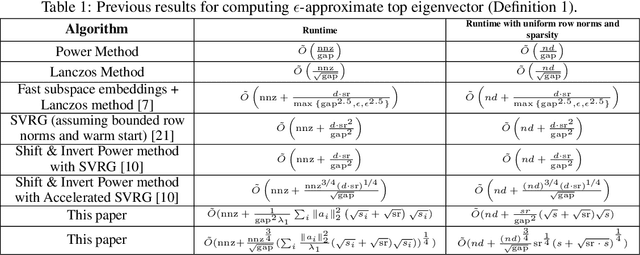

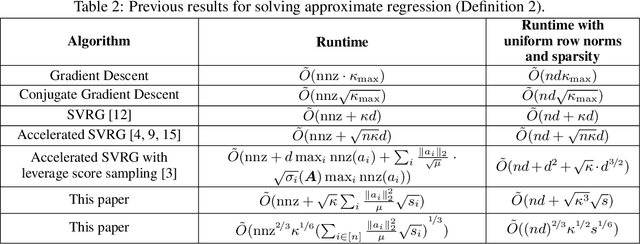

In this paper, we obtain improved running times for regression and top eigenvector computation for numerically sparse matrices. Given a data matrix $A \in \mathbb{R}^{n \times d}$ where every row $a \in \mathbb{R}^d$ has $\|a\|_2^2 \leq L$ and numerical sparsity at most $s$, i.e. $\|a\|_1^2 / \|a\|_2^2 \leq s$, we provide faster algorithms for these problems in many parameter settings. For top eigenvector computation, we obtain a running time of $\tilde{O}(nd + r(s + \sqrt{r s}) / \mathrm{gap}^2)$ where $\mathrm{gap} > 0$ is the relative gap between the top two eigenvalues of $A^\top A$ and $r$ is the stable rank of $A$. This running time improves upon the previous best unaccelerated running time of $O(nd + r d / \mathrm{gap}^2)$ as it is always the case that $r \leq d$ and $s \leq d$. For regression, we obtain a running time of $\tilde{O}(nd + (nL / \mu) \sqrt{s nL / \mu})$ where $\mu > 0$ is the smallest eigenvalue of $A^\top A$. This running time improves upon the previous best unaccelerated running time of $\tilde{O}(nd + n L d / \mu)$. This result expands the regimes where regression can be solved in nearly linear time from when $L/\mu = \tilde{O}(1)$ to when $L / \mu = \tilde{O}(d^{2/3} / (sn)^{1/3})$. Furthermore, we obtain similar improvements even when row norms and numerical sparsities are non-uniform, and we show how to achieve even faster running times by accelerating using approximate proximal point [Frostig et al. 2015] / catalyst [Lin et al. 2015]. Our running times depend only on the size of the input and natural numerical measures of the matrix, i.e. eigenvalues and $\ell_p$ norms, making progress on a key open problem regarding optimal running times for efficient large-scale learning.
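To make the two row-level parameters in the abstract concrete, the following is a minimal sketch that computes the numerical sparsity $\|a\|_1^2 / \|a\|_2^2$ of each row and the resulting bounds $L$ and $s$ for a small matrix. The helper names `numerical_sparsity` and `row_parameters` and the example matrix are illustrative assumptions, not taken from the paper; the sketch only evaluates the definitions and does not implement the paper's algorithms.

```python
def numerical_sparsity(row):
    """Return ||a||_1^2 / ||a||_2^2 for a nonzero row a (hypothetical helper)."""
    l1 = sum(abs(x) for x in row)
    l2_sq = sum(x * x for x in row)
    if l2_sq == 0.0:
        raise ValueError("numerical sparsity is undefined for the zero vector")
    return (l1 * l1) / l2_sq


def row_parameters(matrix):
    """Return (L, s): the largest squared row norm and the largest
    numerical sparsity over all rows (hypothetical helper)."""
    L = max(sum(x * x for x in row) for row in matrix)
    s = max(numerical_sparsity(row) for row in matrix)
    return L, s


# Illustrative 3 x 4 matrix (made-up data, d = 4).
A = [
    [1.0, 0.0, 0.0, 0.0],   # a single nonzero entry: numerical sparsity 1
    [1.0, 1.0, 1.0, 1.0],   # all entries equal: numerical sparsity d = 4
    [10.0, 0.1, 0.1, 0.1],  # fully dense, yet numerical sparsity close to 1
]

L, s = row_parameters(A)
print("L =", L, "s =", s)
for row in A:
    print(row, "->", numerical_sparsity(row))
```

The third row illustrates why numerical sparsity can be a finer measure than the count of nonzeros: every entry is nonzero, but one entry dominates, so the ratio stays near 1 rather than near $d$.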
