Explanatory models in neuroscience: Part 1 -- taking mechanistic abstraction seriously

Paper and Code

Apr 10, 2021

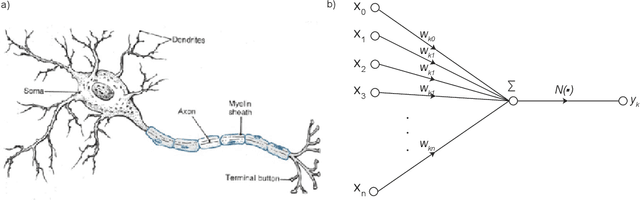

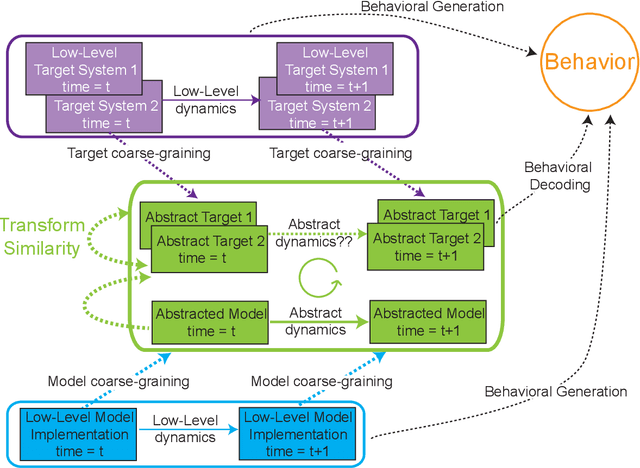

Despite the recent success of neural network models in mimicking animal performance on visual perceptual tasks, critics worry that these models fail to illuminate brain function. We take it that a central approach to explanation in systems neuroscience is that of mechanistic modeling, where understanding the system is taken to require fleshing out the parts, organization, and activities of a system, and how those give rise to behaviors of interest. However, it remains somewhat controversial what it means for a model to describe a mechanism, and whether neural network models qualify as explanatory. We argue that certain kinds of neural network models are actually good examples of mechanistic models, when the right notion of mechanistic mapping is deployed. Building on existing work on model-to-mechanism mapping (3M), we describe criteria delineating such a notion, which we call 3M++. These criteria require us, first, to identify a level of description that is both abstract but detailed enough to be "runnable", and then, to construct model-to-brain mappings using the same principles as those employed for brain-to-brain mapping across individuals. Perhaps surprisingly, the abstractions required are those already in use in experimental neuroscience, and are of the kind deployed in the construction of more familiar computational models, just as the principles of inter-brain mappings are very much in the spirit of those already employed in the collection and analysis of data across animals. In a companion paper, we address the relationship between optimization and intelligibility, in the context of functional evolutionary explanations. Taken together, mechanistic interpretations of computational models and the dependencies between form and function illuminated by optimization processes can help us to understand why brain systems are built they way they are.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge