Explaining the Unexplained: Revealing Hidden Correlations for Better Interpretability

Paper and Code

Dec 02, 2024

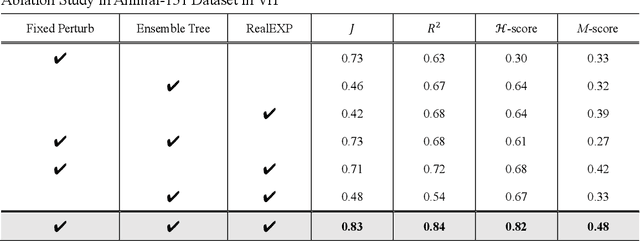

Deep learning has achieved remarkable success in processing and managing unstructured data. However, its "black box" nature imposes significant limitations, particularly in sensitive application domains. While existing interpretable machine learning methods address some of these issues, they often fail to adequately consider feature correlations and provide insufficient evaluation of model decision paths. To overcome these challenges, this paper introduces Real Explainer (RealExp), an interpretability computation method that decouples the Shapley Value into individual feature importance and feature correlation importance. By incorporating feature similarity computations, RealExp enhances interpretability by precisely quantifying both individual feature contributions and their interactions, leading to more reliable and nuanced explanations. Additionally, this paper proposes a novel interpretability evaluation criterion focused on elucidating the decision paths of deep learning models, going beyond traditional accuracy-based metrics. Experimental validations on two unstructured data tasks -- image classification and text sentiment analysis -- demonstrate that RealExp significantly outperforms existing methods in interpretability. Case studies further illustrate its practical value: in image classification, RealExp aids in selecting suitable pre-trained models for specific tasks from an interpretability perspective; in text classification, it enables the optimization of models and approximates the performance of a fine-tuned GPT-Ada model using traditional bag-of-words approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge