Explainable Artificial Intelligence for Increasing User Trust in Deep Reinforcement Learning Driven Autonomous Systems


Jun 07, 2021

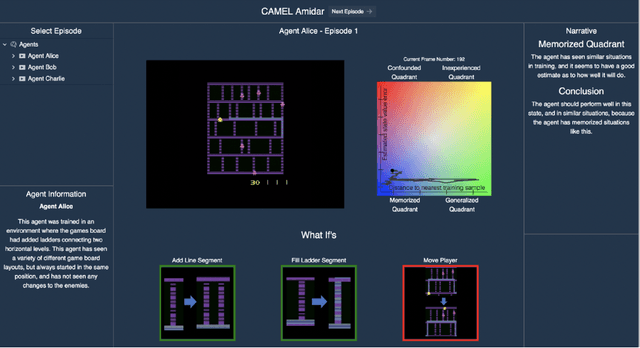

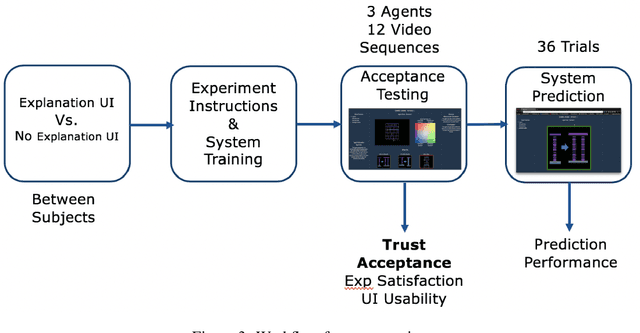

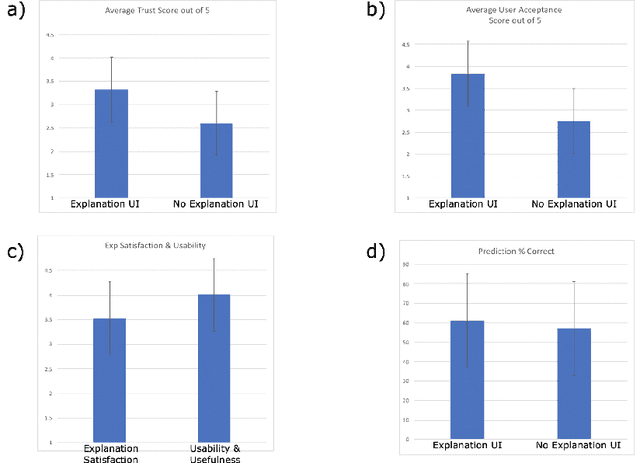

We consider the problem of providing users of deep Reinforcement Learning (RL) based systems with a better understanding of when their output can be trusted. We offer an explainable artificial intelligence (XAI) framework that provides a three-fold explanation: a graphical depiction of the system's generalization and performance in the current game state, an indication of how well the agent would play in semantically similar environments, and a narrative explanation of what the graphical information implies. We created a user interface for our XAI framework and evaluated its efficacy via a human-user experiment. The results demonstrate a statistically significant increase in user trust and acceptance of the AI system with explanation, versus the AI system without explanation.
