Explainability in reinforcement learning: perspective and position

Paper and Code

Mar 22, 2022

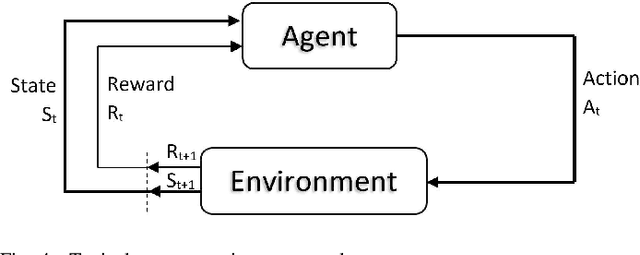

Artificial intelligence (AI) has been embedded into many aspects of people's daily lives and it has become normal for people to have AI make decisions for them. Reinforcement learning (RL) models increase the space of solvable problems with respect to other machine learning paradigms. Some of the most interesting applications are in situations with non-differentiable expected reward function, operating in unknown or underdefined environment, as well as for algorithmic discovery that surpasses performance of any teacher, whereby agent learns from experimental experience through simple feedback. The range of applications and their social impact is vast, just to name a few: genomics, game-playing (chess, Go, etc.), general optimization, financial investment, governmental policies, self-driving cars, recommendation systems, etc. It is therefore essential to improve the trust and transparency of RL-based systems through explanations. Most articles dealing with explainability in artificial intelligence provide methods that concern supervised learning and there are very few articles dealing with this in the area of RL. The reasons for this are the credit assignment problem, delayed rewards, and the inability to assume that data is independently and identically distributed (i.i.d.). This position paper attempts to give a systematic overview of existing methods in the explainable RL area and propose a novel unified taxonomy, building and expanding on the existing ones. The position section describes pragmatic aspects of how explainability can be observed. The gap between the parties receiving and generating the explanation is especially emphasized. To reduce the gap and achieve honesty and truthfulness of explanations, we set up three pillars: proactivity, risk attitudes, and epistemological constraints. To this end, we illustrate our proposal on simple variants of the shortest path problem.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge