Explain to me like I am five -- Sentence Simplification Using Transformers

Paper and Code

Dec 08, 2022

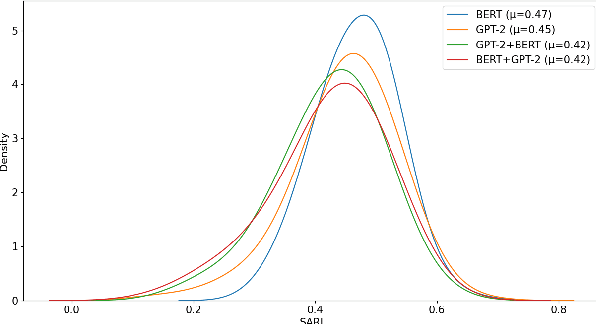

Sentence simplification aims at making the structure of text easier to read and understand while maintaining its original meaning. This can be helpful for people with disabilities, new language learners, or those with low literacy. Simplification often involves removing difficult words and rephrasing the sentence. Previous research have focused on tackling this task by either using external linguistic databases for simplification or by using control tokens for desired fine-tuning of sentences. However, in this paper we purely use pre-trained transformer models. We experiment with a combination of GPT-2 and BERT models, achieving the best SARI score of 46.80 on the Mechanical Turk dataset, which is significantly better than previous state-of-the-art results. The code can be found at https://github.com/amanbasu/sentence-simplification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge