Expectation Propagation for approximate Bayesian inference


Jan 10, 2013

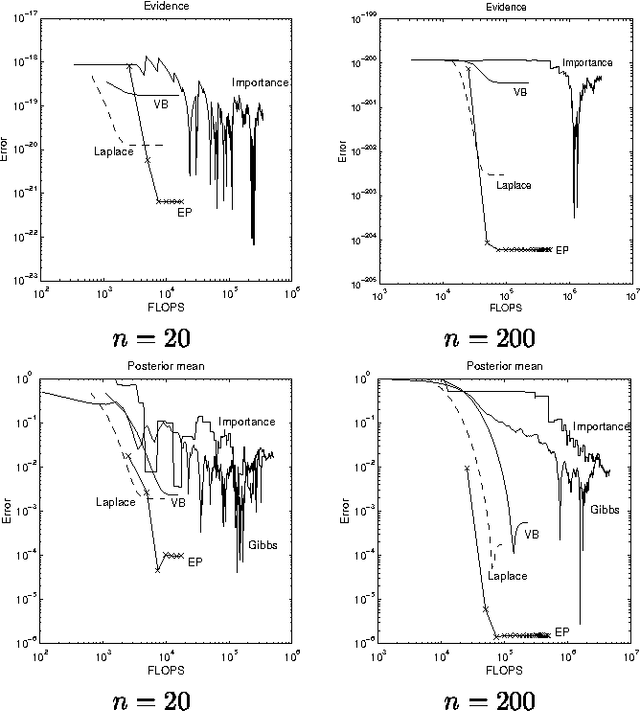

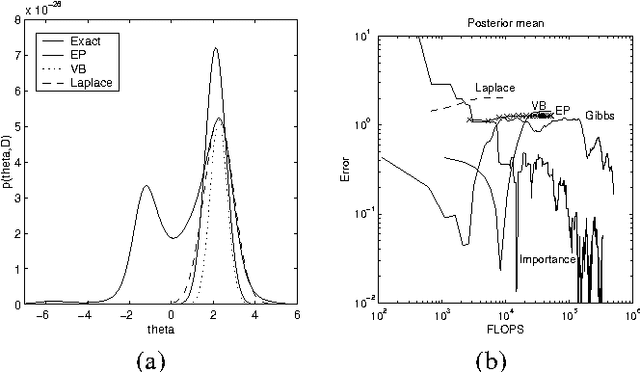

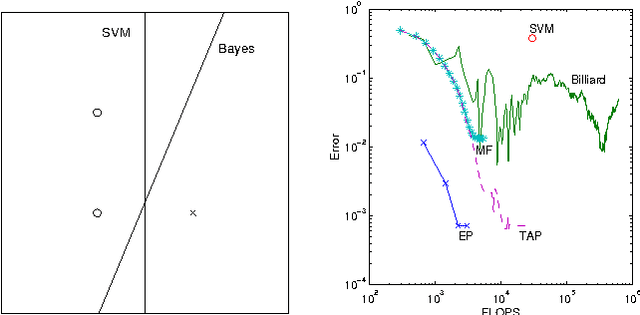

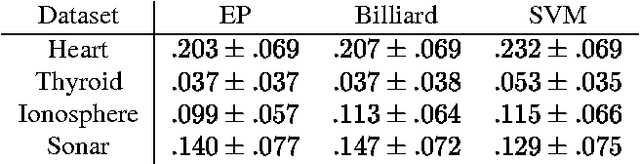

This paper presents a new deterministic approximation technique in Bayesian networks. This method, "Expectation Propagation", unifies two previous techniques: assumed-density filtering, an extension of the Kalman filter, and loopy belief propagation, an extension of belief propagation in Bayesian networks. All three algorithms try to recover an approximate distribution which is close in KL divergence to the true distribution. Loopy belief propagation, because it propagates exact belief states, is useful for a limited class of belief networks, such as those which are purely discrete. Expectation Propagation approximates the belief states by only retaining certain expectations, such as mean and variance, and iterates until these expectations are consistent throughout the network. This makes it applicable to hybrid networks with discrete and continuous nodes. Expectation Propagation also extends belief propagation in the opposite direction: it can propagate richer belief states that incorporate correlations between nodes. Experiments with Gaussian mixture models show Expectation Propagation to be convincingly better than methods with similar computational cost: Laplace's method, variational Bayes, and Monte Carlo. Expectation Propagation also provides an efficient algorithm for training Bayes point machine classifiers.
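The idea of retaining only a mean and variance and iterating until they are consistent can be illustrated with a small sketch. The example below is not the paper's code. It assumes a toy one-dimensional "clutter" model, chosen here for illustration: each observation comes either from a unit-variance Gaussian centred on an unknown `theta` or from a broad background Gaussian. The function names, data values, prior, and mixture weight are all hypothetical. As a further simplification, the moment-matching step is done by numerical quadrature on a grid rather than with closed-form updates.

```python
import numpy as np

def gauss(x, mean, var):
    """Gaussian density N(x; mean, var); broadcasts over arrays."""
    return np.exp(-0.5 * (x - mean) ** 2 / var) / np.sqrt(2 * np.pi * var)

def likelihood(xi, grid, w, clutter_var):
    """Per-observation factor: signal around theta plus a clutter floor."""
    return (1 - w) * gauss(xi, grid, 1.0) + w * gauss(xi, 0.0, clutter_var)

def ep_clutter(x, w=0.2, clutter_var=10.0, prior_var=100.0, sweeps=20):
    """EP with a Gaussian approximation q(theta), stored in natural parameters.

    tau = 1/variance and nu = mean/variance; each likelihood factor gets its
    own Gaussian "site" (tau_site[i], nu_site[i]), initialised to zero.
    """
    grid = np.linspace(-60.0, 60.0, 24001)   # quadrature grid (assumption)
    tau_site = np.zeros(len(x))
    nu_site = np.zeros(len(x))
    tau, nu = 1.0 / prior_var, 0.0           # q starts as the prior N(0, prior_var)
    for _ in range(sweeps):
        for i, xi in enumerate(x):
            # 1. Remove site i from q to obtain the cavity distribution.
            tau_c, nu_c = tau - tau_site[i], nu - nu_site[i]
            if tau_c <= 0:                    # improper cavity: skip this update
                continue
            # 2. Tilted distribution = cavity times the exact factor.
            tilted = gauss(grid, nu_c / tau_c, 1.0 / tau_c)
            tilted = tilted * likelihood(xi, grid, w, clutter_var)
            tilted /= tilted.sum()
            # 3. Moment matching: keep only the mean and variance.
            mean = (grid * tilted).sum()
            var = ((grid - mean) ** 2 * tilted).sum()
            tau, nu = 1.0 / var, mean / var
            # 4. New site = matched q divided by the cavity.
            tau_site[i], nu_site[i] = tau - tau_c, nu - nu_c
    return nu / tau, 1.0 / tau, grid

def exact_moments(x, grid, w=0.2, clutter_var=10.0, prior_var=100.0):
    """Reference posterior mean and variance by brute-force quadrature."""
    post = gauss(grid, 0.0, prior_var)
    for xi in x:
        post = post * likelihood(xi, grid, w, clutter_var)
    post /= post.sum()
    mean = (grid * post).sum()
    return mean, ((grid - mean) ** 2 * post).sum()

# Hypothetical data: five points near theta = 2 and one outlier.
x = np.array([1.8, 2.3, 2.1, 1.6, 2.6, -4.0])
m_ep, v_ep, grid = ep_clutter(x)
m_exact, v_exact = exact_moments(x, grid)
print(f"EP:    mean={m_ep:.3f}, var={v_ep:.3f}")
print(f"exact: mean={m_exact:.3f}, var={v_exact:.3f}")
```

Stopping after a single pass over the data would correspond to assumed-density filtering. The repeated sweeps, in which each site is removed and refined against the current approximation, are what make the retained expectations consistent across factors.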
