ExpansionNet: exploring the sequence length bottleneck in the Transformer for Image Captioning

Paper and Code

Jul 07, 2022

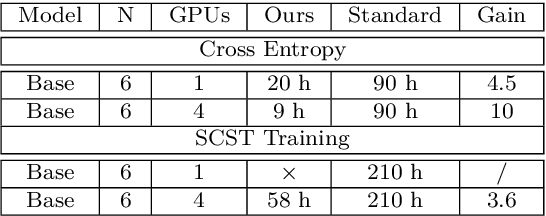

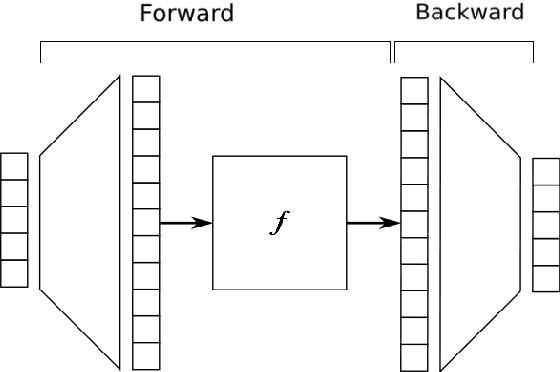

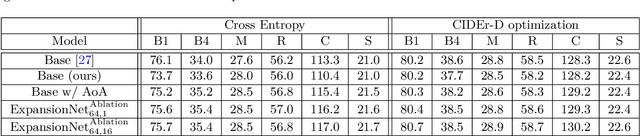

Most recent state of art architectures rely on combinations and variations of three approaches: convolutional, recurrent and self-attentive methods. Our work attempts in laying the basis for a new research direction for sequence modeling based upon the idea of modifying the sequence length. In order to do that, we propose a new method called ``Expansion Mechanism'' which transforms either dynamically or statically the input sequence into a new one featuring a different sequence length. Furthermore, we introduce a novel architecture that exploits such method and achieves competitive performances on the MS-COCO 2014 data set, yielding 134.6 and 131.4 CIDEr-D on the Karpathy test split in the ensemble and single model configuration respectively and 130 CIDEr-D in the official online testing server, despite being neither recurrent nor fully attentive. At the same time we address the efficiency aspect in our design and introduce a convenient training strategy suitable for most computational resources in contrast to the standard one. Source code is available at https://github.com/jchenghu/ExpansionNet

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge