Evaluating Team Skill Aggregation in Online Competitive Games

Paper and Code

Jun 21, 2021

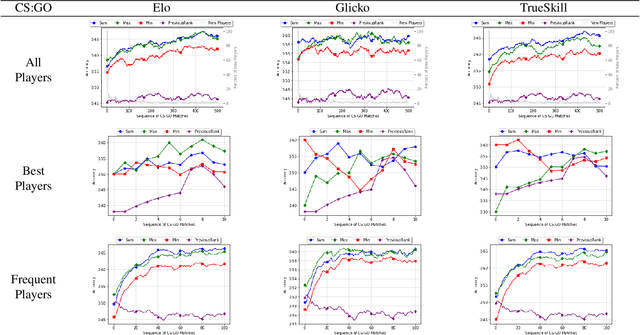

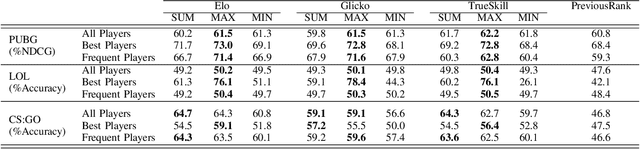

One of the main goals of online competitive games is increasing player engagement by ensuring fair matches. These games use rating systems to create balanced match-ups. Rating systems leverage statistical estimation to rate players' skills and use skill ratings to predict rank before matching players. Skill ratings of individual players can be aggregated to compute the skill level of a team. While research often aims to improve the accuracy of skill estimation and the fairness of match-ups, less attention has been given to how the skill level of a team is calculated from the skill levels of its members. In this paper, we propose two new aggregation methods and compare them with a standard approach extensively used in the research literature. We present an exhaustive analysis of the impact of these methods on the predictive performance of rating systems. We perform our experiments using three popular rating systems, Elo, Glicko, and TrueSkill, on three real-world datasets including over 100,000 battle royale and head-to-head matches. Our evaluations show the superiority of the MAX method over the other two methods in the majority of the tested cases, implying that the overall performance of a team is best determined by the performance of its most skilled member. The results of this study highlight the necessity of devising more elaborate methods for calculating a team's performance, methods that cover different aspects of players' behavior such as skills, strategy, or goals.
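To make the idea of team skill aggregation concrete, the following is a minimal sketch, not the authors' implementation. The abstract names only the MAX method; the sum-based and min-based aggregators below are assumptions included for contrast, as are all function names. The win probability uses the standard Elo logistic curve with a scale of 400, applied here to aggregated team ratings purely for illustration.

```python
from typing import Callable, Sequence

# Hypothetical aggregators: each maps member ratings to a single team rating.
def aggregate_sum(ratings: Sequence[float]) -> float:
    """Team skill as the sum of member ratings (assumed baseline)."""
    return float(sum(ratings))

def aggregate_max(ratings: Sequence[float]) -> float:
    """Team skill as the rating of the most skilled member (MAX)."""
    return float(max(ratings))

def aggregate_min(ratings: Sequence[float]) -> float:
    """Team skill as the rating of the least skilled member (assumed variant)."""
    return float(min(ratings))

def elo_win_probability(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Standard Elo expected score of side A against side B."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))

def predict(
    team_a: Sequence[float],
    team_b: Sequence[float],
    aggregate: Callable[[Sequence[float]], float],
) -> float:
    """Probability that team A beats team B under a given aggregation method."""
    return elo_win_probability(aggregate(team_a), aggregate(team_b))

if __name__ == "__main__":
    # Illustrative ratings only, not taken from the paper's datasets.
    balanced_team = [1500.0, 1500.0, 1500.0]
    star_team = [1900.0, 1200.0, 1200.0]
    for name, fn in [("sum", aggregate_sum), ("max", aggregate_max), ("min", aggregate_min)]:
        print(name, round(predict(balanced_team, star_team, fn), 3))
```

With these made-up ratings, the sum-based aggregator favors the balanced team while the max-based aggregator favors the team with the single strong player, which shows how the choice of aggregation method alone can change the predicted winner.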
