ET-USB: Transformer-Based Sequential Behavior Modeling for Inbound Customer Service

Dec 27, 2019

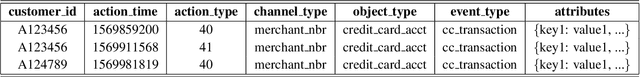

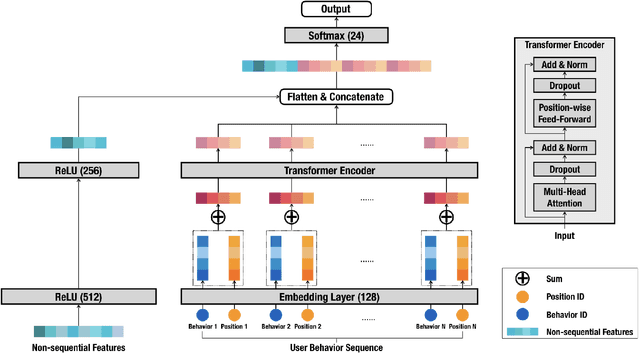

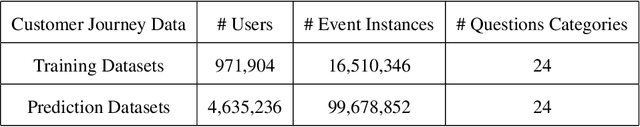

Deep learning models with attention mechanisms have achieved exceptional results on many tasks, including language tasks and recommendation systems. Whereas previous studies have emphasized the allocation of phone agents, we focus on inbound call prediction for customer service. A common method of analyzing users' historical behavior is to extract many types of features aggregated over time, but that method may fail to capture the order in which users' behaviors occur. Therefore, we created a new approach, ET-USB, that incorporates both sequential and nonsequential user features; we apply the Transformer encoder, a self-attention network model, to capture the information underlying user behavior sequences. ET-USB is helpful in various business scenarios at Cathay Financial Holdings. We conducted experiments to test the proposed network structure's ability to process various dimensions of behavior data; the results suggest that ET-USB delivers results superior to those delivered by other deep-learning models.
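To illustrate why features aggregated over time can miss behavioral sequences, the following minimal sketch compares two users whose event counts are identical but whose event order differs. The event names, the two user logs, and the helper functions `aggregate_features` and `sequence_features` are hypothetical and chosen only for illustration; they are not taken from the paper. The bigram helper is a simple stand-in for an order-aware representation, not the Transformer encoder that ET-USB uses.

```python
from collections import Counter

# Hypothetical event logs for two users (event names are illustrative only).
user_a = ["login", "view_bill", "payment_failed", "view_faq"]
user_b = ["view_faq", "login", "payment_failed", "view_bill"]


def aggregate_features(events):
    """Order-free summary: how many times each event type occurred."""
    return Counter(events)


def sequence_features(events):
    """Order-aware summary: consecutive event pairs (bigrams)."""
    return list(zip(events, events[1:]))


# Both users performed the same events the same number of times,
# so the aggregated view cannot tell them apart.
same_aggregates = aggregate_features(user_a) == aggregate_features(user_b)

# The order of events differs, so an order-aware view can.
same_sequences = sequence_features(user_a) == sequence_features(user_b)

print(same_aggregates, same_sequences)  # prints: True False
```

In this toy case only the order-aware view separates a user who reads the FAQ after a failed payment from one who reads it beforehand. A sequence model such as a Transformer encoder is one way to learn such order-dependent patterns directly from the raw event sequence rather than from hand-built pairs.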
