EnTRPO: Trust Region Policy Optimization Method with Entropy Regularization


Oct 26, 2021

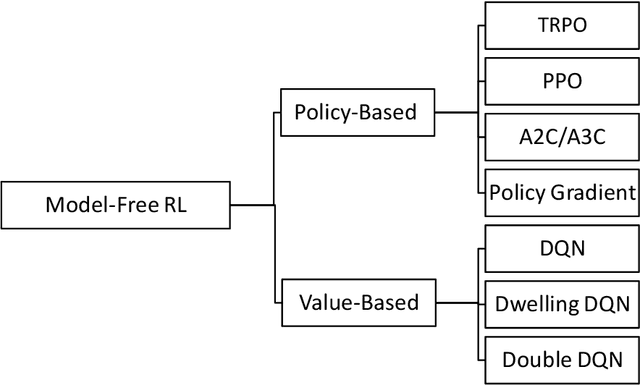

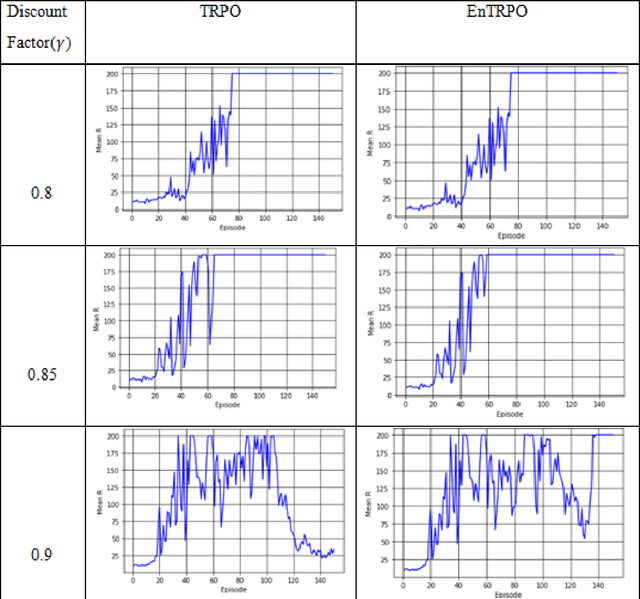

Trust Region Policy Optimization (TRPO) is a popular and empirically successful policy search algorithm in reinforcement learning (RL). It iteratively solves a surrogate problem that restricts consecutive policies to be close to each other. TRPO is an on-policy algorithm. On-policy methods bring many benefits, such as the ability to evaluate each resulting policy. However, they typically discard all knowledge about the policies that existed before. In this work, we borrow a replay buffer from the off-policy learning setting and apply it to TRPO. Entropy regularization is commonly used to improve policy optimization in reinforcement learning. It is thought to aid exploration and generalization by encouraging more random policy choices. We add an entropy regularization term to the advantage over the policy π, accumulated over time steps, in TRPO. We call this update EnTRPO. Our experiments demonstrate that EnTRPO achieves better performance than the original TRPO when controlling a Cart-Pole system.
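The abstract does not give the exact form of the entropy term, so the following is only a minimal sketch of one plausible reading: an entropy bonus is added to the reward at every time step, the augmented rewards are accumulated as discounted returns, and a value baseline is subtracted to obtain the advantage. The function names, the entropy coefficient `beta`, the discount `gamma`, and the baseline subtraction are all assumptions made for illustration, not the authors' implementation.

```python
import math


def categorical_entropy(probs):
    """Shannon entropy (in nats) of one discrete action distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def entropy_regularized_advantages(rewards, values, action_probs,
                                   gamma=0.99, beta=0.01):
    """Discounted returns of (reward + beta * entropy), minus a baseline.

    rewards:      per-step rewards of one trajectory
    values:       per-step baseline estimates V(s_t) (assumed given)
    action_probs: per-step action distribution pi(. | s_t)
    gamma, beta:  hypothetical discount and entropy coefficient
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step reuses the return of the following step.
    for t in reversed(range(len(rewards))):
        augmented = rewards[t] + beta * categorical_entropy(action_probs[t])
        running = augmented + gamma * running
        advantages[t] = running - values[t]
    return advantages
```

With `beta=0` this reduces to the ordinary return-minus-baseline advantage, which makes the effect of the entropy term easy to isolate. A larger `beta` rewards trajectories that pass through states where the policy is still close to uniform, which is the exploration effect the abstract describes.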
