Entropy of the Conditional Expectation under Gaussian Noise

Paper and Code

Jun 08, 2021

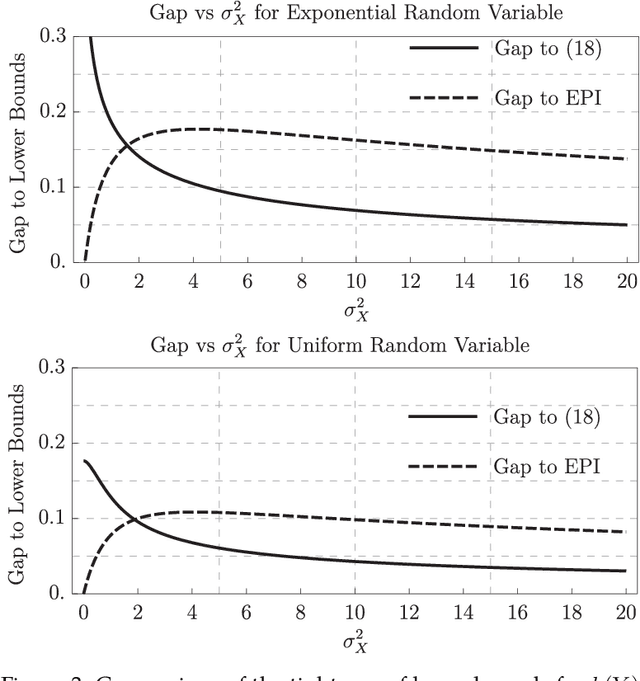

This paper considers an additive Gaussian noise channel with arbitrarily distributed, finite-variance input signals. It studies the differential entropy of the minimum mean-square error (MMSE) estimator, that is, the conditional mean of the input given the output, and provides a new lower bound connecting the entropies of the input, the output, and the conditional mean. Specifically, the sum of the entropies of the conditional mean and the output is always greater than or equal to twice the input entropy. Various other properties are obtained, including upper bounds, asymptotics, a Taylor series expansion, and a connection to Fisher information. An application of the lower bound to the remote-source coding problem is discussed, and the lower and upper bounds are extended to the vector Gaussian channel.
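
For concreteness, the lower bound can be written out and checked against a Gaussian input. This is only a sketch under assumed notation that the abstract does not specify: the channel is taken to be $Y = X + N$ with $N \sim \mathcal{N}(0, \sigma^2)$ independent of $X$, $h(\cdot)$ denotes differential entropy, and $P$ denotes the input variance in the Gaussian example.

```latex
% Assumed channel model (notation not from the abstract):
%   Y = X + N,  N ~ N(0, sigma^2),  N independent of X.
% Lower bound as stated in the abstract:
\[
  h\bigl(\mathbb{E}[X \mid Y]\bigr) + h(Y) \;\ge\; 2\,h(X).
\]
% Sanity check with a Gaussian input X ~ N(0, P):
\begin{align*}
  \mathbb{E}[X \mid Y] &= \frac{P}{P + \sigma^2}\, Y, \\
  h(X) &= \tfrac{1}{2} \log\bigl(2\pi e\, P\bigr), \\
  h(Y) &= \tfrac{1}{2} \log\bigl(2\pi e\, (P + \sigma^2)\bigr), \\
  h\bigl(\mathbb{E}[X \mid Y]\bigr)
       &= h(Y) + \log\frac{P}{P + \sigma^2}
        = \tfrac{1}{2} \log\Bigl(2\pi e\, \frac{P^2}{P + \sigma^2}\Bigr), \\
  h\bigl(\mathbb{E}[X \mid Y]\bigr) + h(Y)
       &= \tfrac{1}{2} \log\bigl((2\pi e)^2 P^2\bigr)
        = 2\,h(X).
\end{align*}
```

Under these assumptions, a Gaussian input meets the bound with equality for every noise variance $\sigma^2$, which is consistent with the "greater than or equal to" form of the statement.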
