Energy-efficient Training of Distributed DNNs in the Mobile-edge-cloud Continuum

Paper and Code

Feb 23, 2022

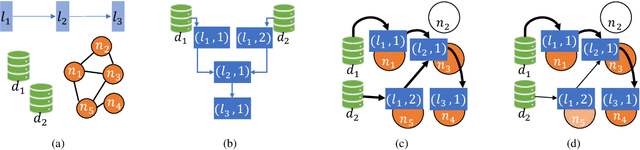

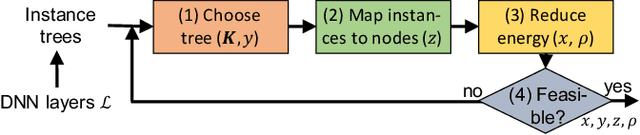

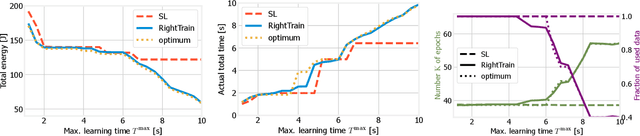

We address distributed machine learning in multi-tier (e.g., mobile-edge-cloud) networks where a heterogeneous set of nodes cooperate to perform a learning task. Due to the presence of multiple data sources and computation-capable nodes, a learning controller (e.g., located in the edge) has to make decisions about (i) which distributed ML model structure to select, (ii) which data should be used for the ML model training, and (iii) which resources should be allocated to it. Since these decisions deeply influence one another, they should be made jointly. In this paper, we envision a new approach to distributed learning in multi-tier networks, which aims at maximizing ML efficiency. To this end, we propose a solution concept, called RightTrain, that achieves energy-efficient ML model training, while fulfilling learning time and quality requirements. RightTrain makes high-quality decisions in polynomial time. Further, our performance evaluation shows that RightTrain closely matches the optimum and outperforms the state of the art by over 50%.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge