End-to-End Adversarial White Box Attacks on Music Instrument Classification

Paper and Code

Jul 29, 2020

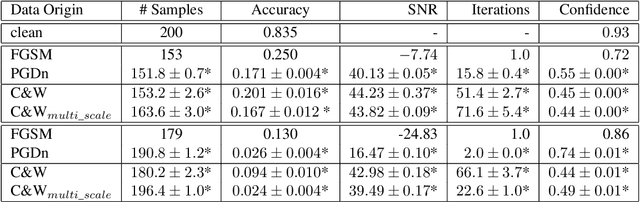

Small adversarial perturbations of input data are able to drastically change performance of machine learning systems, thereby challenging the validity of such systems. We present the very first end-to-end adversarial attacks on a music instrument classification system allowing to add perturbations directly to audio waveforms instead of spectrograms. Our attacks are able to reduce the accuracy close to a random baseline while at the same time keeping perturbations almost imperceptible and producing misclassifications to any desired instrument.

* 8 pages, 2 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge