EfficientNet-eLite: Extremely Lightweight and Efficient CNN Models for Edge Devices by Network Candidate Search

Paper and Code

Sep 16, 2020

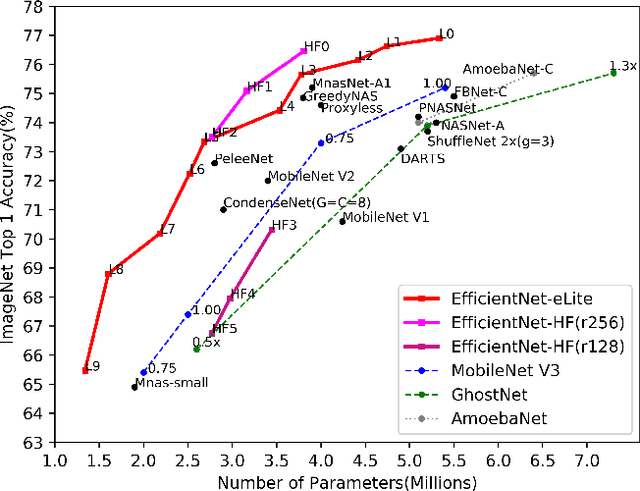

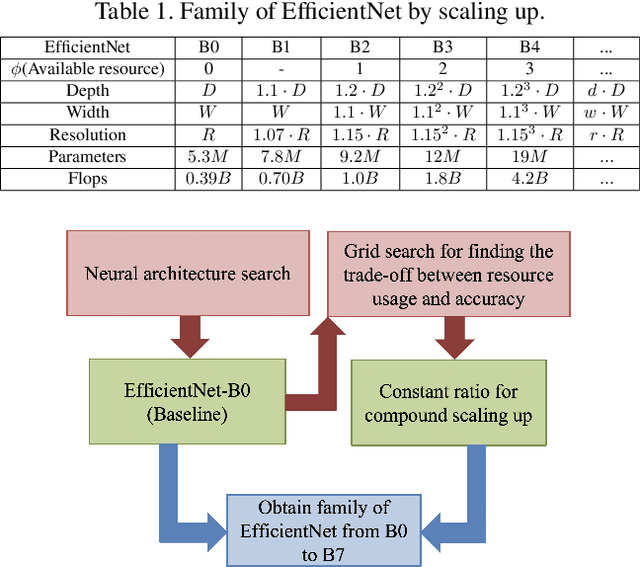

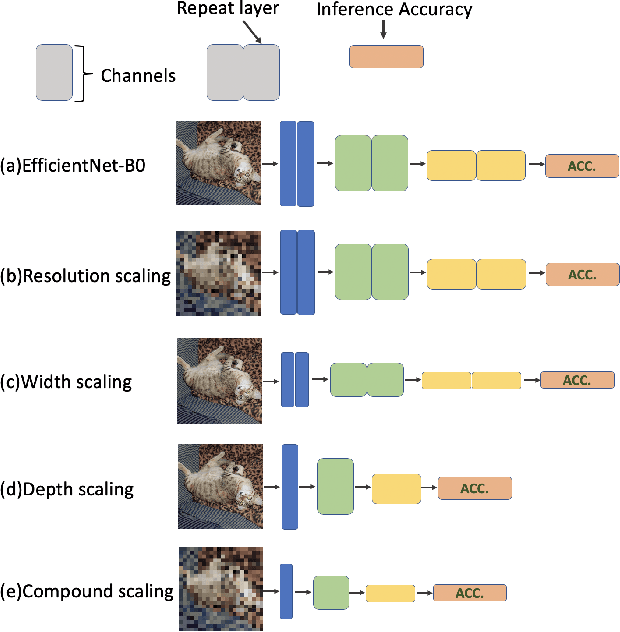

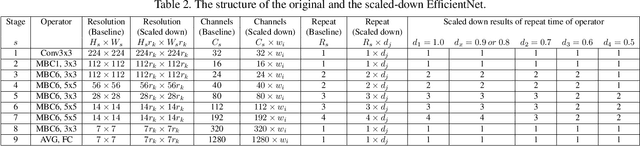

Embedding Convolutional Neural Network (CNN) into edge devices for inference is a very challenging task because such lightweight hardware is not born to handle this heavyweight software, which is the common overhead from the modern state-of-the-art CNN models. In this paper, targeting at reducing the overhead with trading the accuracy as less as possible, we propose a novel of Network Candidate Search (NCS), an alternative way to study the trade-off between the resource usage and the performance through grouping concepts and elimination tournament. Besides, NCS can also be generalized across any neural network. In our experiment, we collect candidate CNN models from EfficientNet-B0 to be scaled down in varied way through width, depth, input resolution and compound scaling down, applying NCS to research the scaling-down trade-off. Meanwhile, a family of extremely lightweight EfficientNet is obtained, called EfficientNet-eLite. For further embracing the CNN edge application with Application-Specific Integrated Circuit (ASIC), we adjust the architectures of EfficientNet-eLite to build the more hardware-friendly version, EfficientNet-HF. Evaluation on ImageNet dataset, both proposed EfficientNet-eLite and EfficientNet-HF present better parameter usage and accuracy than the previous start-of-the-art CNNs. Particularly, the smallest member of EfficientNet-eLite is more lightweight than the best and smallest existing MnasNet with 1.46x less parameters and 0.56% higher accuracy. Code is available at https://github.com/Ching-Chen-Wang/EfficientNet-eLite

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge