Efficient Black-box Assessment of Autonomous Vehicle Safety


Dec 08, 2019

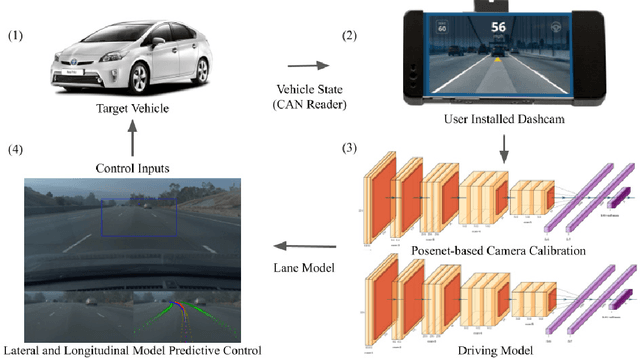

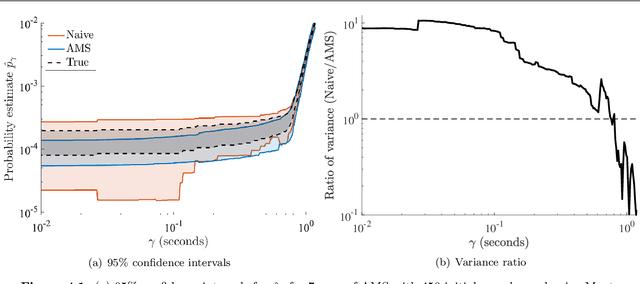

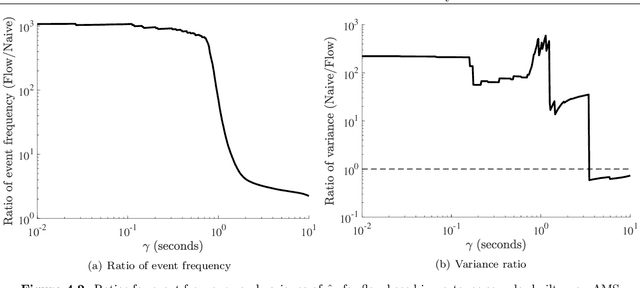

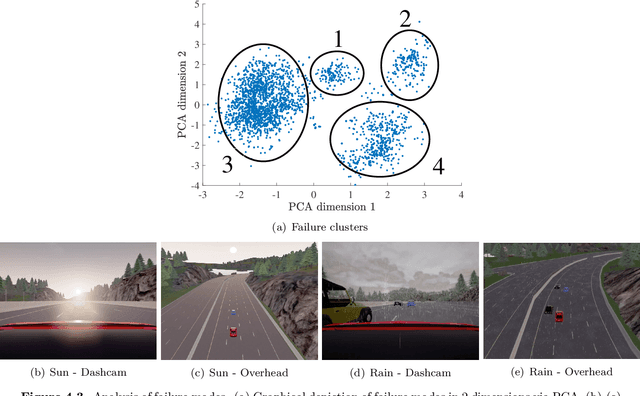

While autonomous vehicle (AV) technology has shown substantial progress, we still lack tools for rigorous and scalable testing. Real-world testing, the *de facto* evaluation method, is dangerous to the public. Moreover, due to the rare nature of failures, billions of miles of driving are needed to statistically validate performance claims. Thus, the industry has largely turned to simulation to evaluate AV systems. However, having a simulation stack alone is not a solution. A simulation testing framework needs to prioritize which scenarios to run, learn how the chosen scenarios provide coverage of failure modes, and rank failure scenarios in order of importance. We implement a simulation testing framework that evaluates an entire modern AV system as a black box. This framework estimates the probability of accidents under a base distribution governing standard traffic behavior. To accelerate rare-event probability evaluation, we efficiently learn to identify and rank failure scenarios via adaptive importance-sampling methods. Using this framework, we conduct the first independent evaluation of a full-stack commercial AV system, Comma AI's OpenPilot.
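To make the idea of adaptive importance sampling for rare-event estimation concrete, here is a minimal toy sketch. It is not the paper's framework. It assumes a one-dimensional standard normal base distribution, a hypothetical `simulate` function standing in for the black-box simulator, and a cross-entropy-style update of a Gaussian proposal mean, which is only one of several adaptive importance-sampling schemes. All names and parameter values are illustrative assumptions.

```python
import math
import random


def simulate(x):
    """Hypothetical black-box simulator: maps a scenario parameter to a risk score.

    In this toy, the score is the parameter itself; a 'failure' is score >= threshold.
    """
    return x


def likelihood_ratio(x, mu):
    """Density ratio p0(x) / q_mu(x) for base p0 = N(0, 1) and proposal q_mu = N(mu, 1)."""
    return math.exp(-0.5 * x * x + 0.5 * (x - mu) ** 2)


def adaptive_importance_sampling(threshold=4.0, n=5000, rho=0.1, max_iters=20, seed=0):
    """Estimate P(simulate(X) >= threshold) for X ~ N(0, 1) with an adapted proposal."""
    rng = random.Random(seed)
    mu = 0.0  # start from the base distribution
    for _ in range(max_iters):
        xs = [rng.gauss(mu, 1.0) for _ in range(n)]
        scores = sorted(simulate(x) for x in xs)
        # Intermediate level: the (1 - rho) sample quantile, capped at the true threshold.
        level = min(scores[int((1 - rho) * n)], threshold)
        elite = [x for x in xs if simulate(x) >= level]
        weights = [likelihood_ratio(x, mu) for x in elite]
        # Cross-entropy update: weighted mean of the elite samples.
        mu = sum(w * x for w, x in zip(weights, elite)) / sum(weights)
        if level >= threshold:
            break
    # Final importance-sampling estimate under the adapted proposal.
    xs = [rng.gauss(mu, 1.0) for _ in range(n)]
    hits = (likelihood_ratio(x, mu) for x in xs if simulate(x) >= threshold)
    return sum(hits) / n, mu


if __name__ == "__main__":
    estimate, mu = adaptive_importance_sampling()
    exact = 0.5 * math.erfc(4.0 / math.sqrt(2))  # closed form, available only in this toy
    print(f"proposal mean: {mu:.3f}")
    print(f"estimate: {estimate:.3e}  exact: {exact:.3e}")
```

In this toy the failure probability is on the order of 1e-5, so naive Monte Carlo with a few thousand samples would usually observe no failures at all, whereas the shifted proposal concentrates samples near the failure region and reweights them back to the base distribution. A real system would replace `simulate` with full simulator rollouts over a high-dimensional scenario space.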
