EEG2Mel: Reconstructing Sound from Brain Responses to Music

Paper and Code

Jul 28, 2022

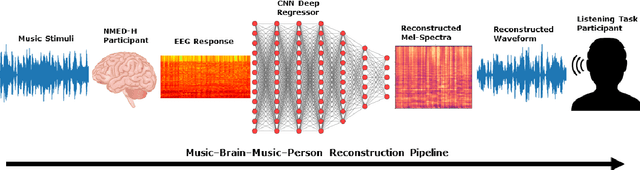

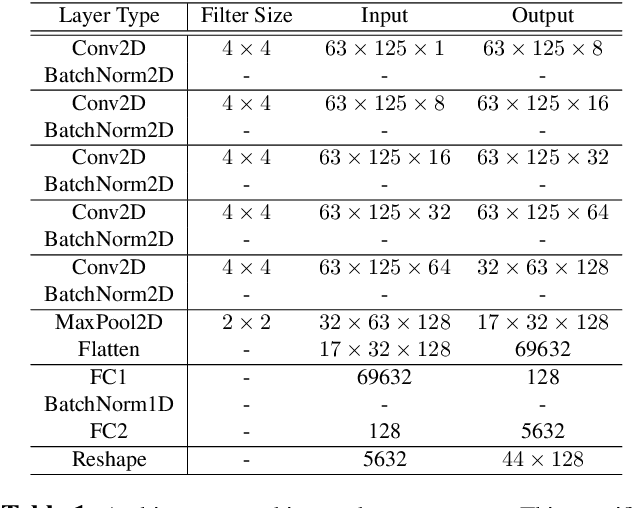

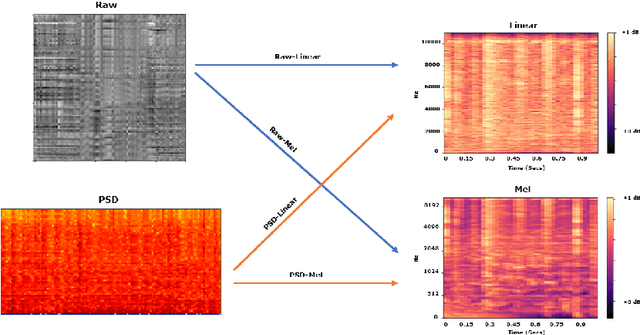

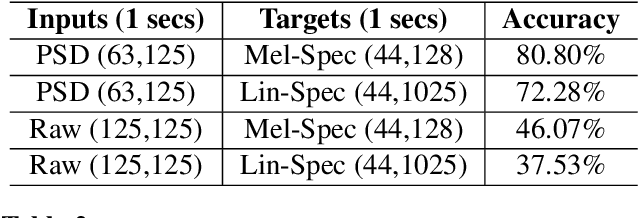

Information retrieval from brain responses to auditory and visual stimuli has shown success through classification of song names and image classes presented to participants while recording EEG signals. Information retrieval in the form of reconstructing auditory stimuli has also shown some success, but here we improve on previous methods by reconstructing music stimuli well enough to be perceived and identified independently. Furthermore, deep learning models were trained on time-aligned music stimuli spectrum for each corresponding one-second window of EEG recording, which greatly reduces feature extraction steps needed when compared to prior studies. The NMED-Tempo and NMED-Hindi datasets of participants passively listening to full length songs were used to train and validate Convolutional Neural Network (CNN) regressors. The efficacy of raw voltage versus power spectrum inputs and linear versus mel spectrogram outputs were tested, and all inputs and outputs were converted into 2D images. The quality of reconstructed spectrograms was assessed by training classifiers which showed 81% accuracy for mel-spectrograms and 72% for linear spectrograms (10% chance accuracy). Lastly, reconstructions of auditory music stimuli were discriminated by listeners at an 85% success rate (50% chance) in a two-alternative match-to-sample task.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge