EEG-ConvTransformer for Single-Trial EEG based Visual Stimuli Classification

Paper and Code

Jul 08, 2021

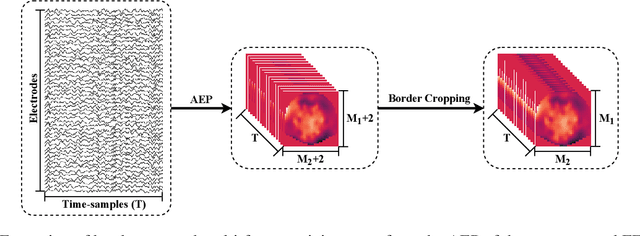

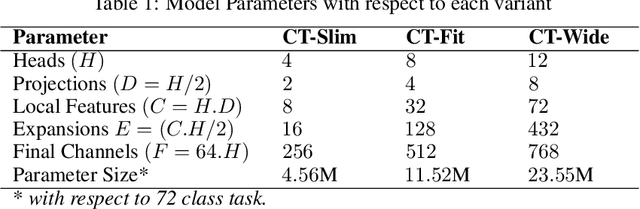

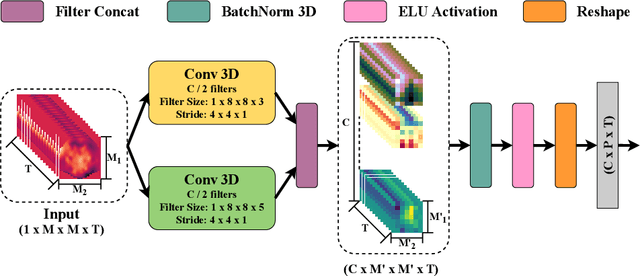

Different categories of visual stimuli activate different responses in the human brain. These signals can be captured with EEG for utilization in applications such as Brain-Computer Interface (BCI). However, accurate classification of single-trial data is challenging due to low signal-to-noise ratio of EEG. This work introduces an EEG-ConvTranformer network that is based on multi-headed self-attention. Unlike other transformers, the model incorporates self-attention to capture inter-region interactions. It further extends to adjunct convolutional filters with multi-head attention as a single module to learn temporal patterns. Experimental results demonstrate that EEG-ConvTransformer achieves improved classification accuracy over the state-of-the-art techniques across five different visual stimuli classification tasks. Finally, quantitative analysis of inter-head diversity also shows low similarity in representational subspaces, emphasizing the implicit diversity of multi-head attention.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge