DuoLift-GAN:Reconstructing CT from Single-view and Biplanar X-Rays with Generative Adversarial Networks

Paper and Code

Nov 12, 2024

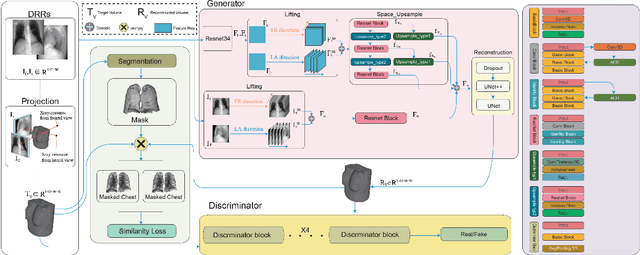

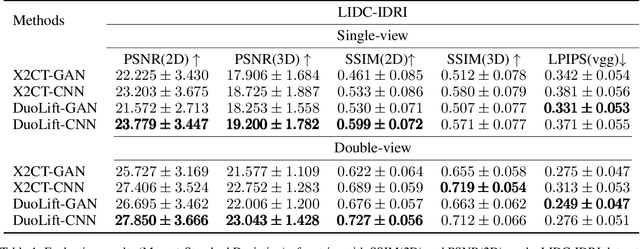

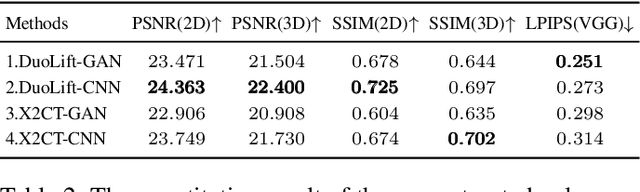

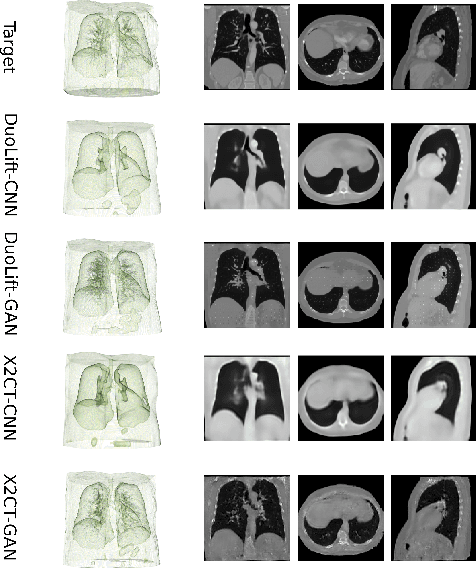

Computed tomography (CT) provides highly detailed three-dimensional (3D) medical images but is costly, time-consuming, and often inaccessible in intraoperative settings (Organization et al. 2011). Recent advancements have explored reconstructing 3D chest volumes from sparse 2D X-rays, such as single-view or orthogonal double-view images. However, current models tend to process 2D images in a planar manner, prioritizing visual realism over structural accuracy. In this work, we introduce DuoLift Generative Adversarial Networks (DuoLift-GAN), a novel architecture with dual branches that independently elevate 2D images and their features into 3D representations. These 3D outputs are merged into a unified 3D feature map and decoded into a complete 3D chest volume, enabling richer 3D information capture. We also present a masked loss function that directs reconstruction towards critical anatomical regions, improving structural accuracy and visual quality. This paper demonstrates that DuoLift-GAN significantly enhances reconstruction accuracy while achieving superior visual realism compared to existing methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge