DSPG: Decentralized Simultaneous Perturbations Gradient Descent Scheme


Mar 17, 2019

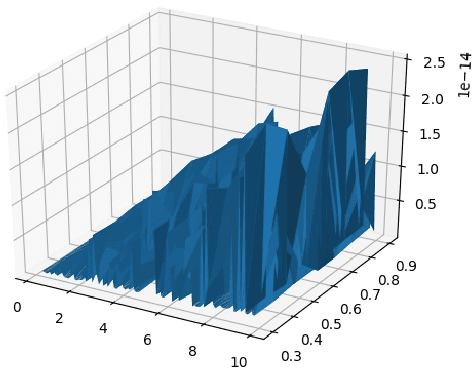

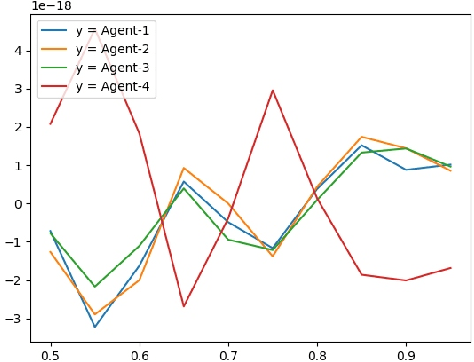

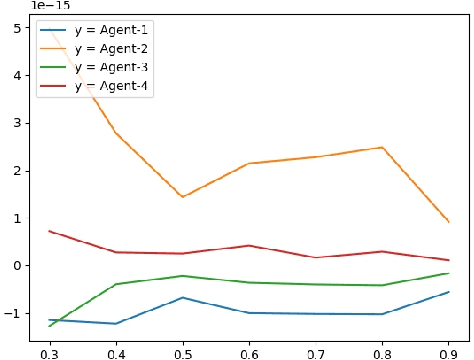

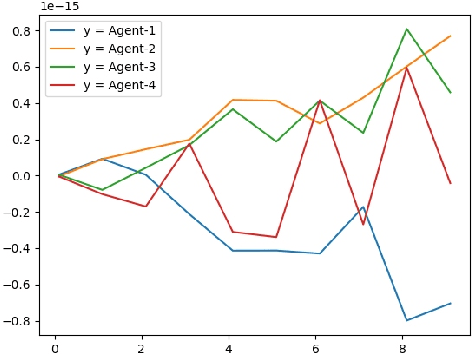

In this paper, we present DSPG (Decentralized Simultaneous Perturbation Stochastic Approximations, with Constant Sensitivity Parameters), an asynchronous approximate gradient method that is easy to implement. It is obtained by modifying SPSA (Simultaneous Perturbation Stochastic Approximation) to allow for decentralized optimization in multi-agent learning and distributed control scenarios. SPSA is a popular approximate gradient method, developed by Spall, that is used in robotics and learning. In the multi-agent learning setup considered here, the agents are asynchronous (each agent follows its own local clock) and communicate over a wireless medium that is prone to losses and delays. We analyze two sources of gradient estimation bias: the bias that arises from fixing the sensitivity parameters to a single constant value, and the bias that arises from communication losses and delays. Specifically, we show that these biases can be countered through more reliable and more frequent communication and/or by choosing a small fixed value for the sensitivity parameters. We also discuss the variance of the gradient estimator and its effect on the rate of convergence. Finally, we present numerical results that support DSPG and the preceding analysis.
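To make the role of the sensitivity parameter concrete, the following is a minimal sketch of the standard single-agent SPSA gradient estimate with a constant sensitivity parameter. It is not the DSPG algorithm itself: it omits the decentralized, asynchronous, and lossy-communication aspects described in the abstract. The names `spsa_gradient`, `spsa_minimize`, `quadratic`, and the values of `c`, `step`, and `iters` are assumptions chosen for illustration only.

```python
import numpy as np


def spsa_gradient(f, x, c, rng):
    """Two-sided SPSA gradient estimate with a constant sensitivity parameter c.

    All coordinates are perturbed at once along a random +/-1 direction,
    so only two evaluations of f are needed regardless of dimension.
    """
    delta = rng.choice([-1.0, 1.0], size=x.shape)  # Rademacher perturbation
    diff = f(x + c * delta) - f(x - c * delta)
    # Elementwise division by delta_i (equal to multiplication since delta_i = +/-1)
    return diff / (2.0 * c * delta)


def spsa_minimize(f, x0, c=0.01, step=0.05, iters=2000, seed=0):
    """Plain gradient descent driven by the SPSA estimate (illustrative values)."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float).copy()
    for _ in range(iters):
        x -= step * spsa_gradient(f, x, c, rng)
    return x


def quadratic(x):
    """Hypothetical test objective with its minimum at the all-ones vector."""
    return float(np.sum((x - 1.0) ** 2))


x_final = spsa_minimize(quadratic, np.zeros(3))
print(x_final)
```

For a quadratic objective the two-sided difference carries no bias from `c`, so this toy run only illustrates the mechanics. For general smooth objectives the estimate is biased for any fixed `c > 0`, which is the kind of bias the abstract says can be reduced by choosing a small fixed value.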
