DropTrack -- automatic droplet tracking using deep learning for microfluidic applications

May 05, 2022

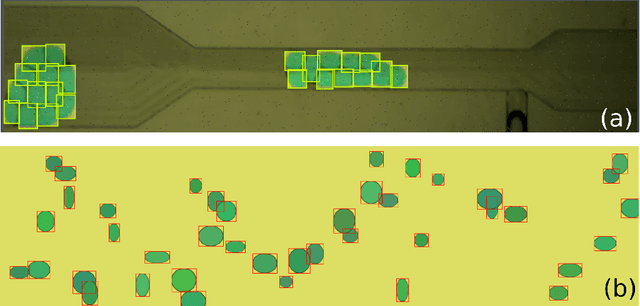

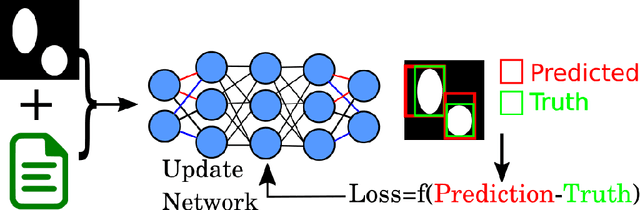

Deep neural networks are rapidly emerging as data analysis tools, often outperforming the conventional techniques used for complex microfluidic systems. One fundamental analysis frequently needed in microfluidic experiments is counting and tracking droplets. Droplet tracking in dense emulsions is particularly challenging because droplets move in tightly packed configurations; sometimes the individual droplets in these dense clusters are hard to resolve, even for a human observer. Here, two state-of-the-art deep learning algorithms, one for object detection (YOLO) and one for object tracking (DeepSORT), are combined into a single image analysis tool, DropTrack, to track droplets in microfluidic experiments. DropTrack analyzes input videos, extracts droplet trajectories, and infers other observables of interest, such as droplet counts. Training an object detector network for droplet recognition on manually annotated images is labor-intensive and a persistent bottleneck. This work partly resolves this problem by training object detector networks (YOLOv5) on hybrid datasets containing both real and synthetic images. As a case study, we analyze a double emulsion experiment to measure DropTrack's performance. For our test case, YOLO networks trained with 60% synthetic images perform comparably in droplet counting to a network trained on 100% real images, while reducing the image annotation work by 60%. DropTrack's performance is measured in terms of mean average precision (mAP), mean squared error in droplet counts, and inference speed. The fastest configuration of DropTrack runs inference at about 30 frames per second, well within the standards for real-time image analysis.
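To make the detect-then-track idea concrete, here is a minimal sketch of how per-frame detections can be linked into droplet trajectories and how a counting error can be scored. It is neither DropTrack's code nor DeepSORT: it swaps in a much simpler greedy intersection-over-union (IoU) matcher, whereas DeepSORT additionally uses a Kalman-filter motion model, appearance embeddings, and Hungarian assignment. The box format `(x1, y1, x2, y2)`, the function names `iou`, `track`, and `count_mse`, and the threshold `min_iou=0.3` are all hypothetical, chosen for illustration. The counting metric assumes one plausible reading of "mean squared error in droplet counts", namely per-frame predicted counts compared with ground-truth counts.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # hypothetical format: (x1, y1, x2, y2) in pixels
Trajectory = List[Tuple[int, float, float]]  # (frame index, centroid x, centroid y)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def track(frames: List[List[Box]], min_iou: float = 0.3) -> Dict[int, Trajectory]:
    """Greedy IoU tracker (a simplified stand-in for DeepSORT, not DeepSORT itself)."""
    trajectories: Dict[int, Trajectory] = {}
    last_box: Dict[int, Box] = {}  # most recent box of each live track
    next_id = 0
    for t, detections in enumerate(frames):
        # Score every (track, detection) pair; match the highest-IoU pairs first.
        pairs = sorted(
            ((iou(last_box[k], d), k, j) for k in last_box for j, d in enumerate(detections)),
            reverse=True,
        )
        used_tracks, assigned = set(), {}
        for score, k, j in pairs:
            if score < min_iou:
                break
            if k in used_tracks or j in assigned:
                continue
            used_tracks.add(k)
            assigned[j] = k
        new_last: Dict[int, Box] = {}
        for j, d in enumerate(detections):
            k = assigned.get(j)
            if k is None:  # unmatched detection starts a new track
                k, next_id = next_id, next_id + 1
                trajectories[k] = []
            trajectories[k].append((t, (d[0] + d[2]) / 2, (d[1] + d[3]) / 2))
            new_last[k] = d
        # Simplification: tracks unmatched in this frame are ended immediately
        # (DeepSORT instead keeps them alive for a number of frames).
        last_box = new_last
    return trajectories


def count_mse(predicted: List[int], truth: List[int]) -> float:
    """Mean squared error between predicted and ground-truth per-frame droplet counts."""
    if len(predicted) != len(truth) or not truth:
        raise ValueError("count sequences must be non-empty and equally long")
    return sum((p - q) ** 2 for p, q in zip(predicted, truth)) / len(truth)


# Usage: two droplets drift slightly; the second is missed in the last frame.
frames = [
    [(0, 0, 10, 10), (50, 50, 60, 60)],
    [(2, 0, 12, 10), (51, 52, 61, 62)],
    [(4, 0, 14, 10)],
]
trajs = track(frames)
counts = [len(f) for f in frames]
print(trajs)
print(count_mse(counts, [2, 2, 2]))
```

Greedy matching keeps the example short and dependency-free; a real tracker would typically solve the assignment optimally and model droplet motion, which matters in the dense, tightly packed emulsions described above.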
