Dropout against Deep Leakage from Gradients


Aug 25, 2021

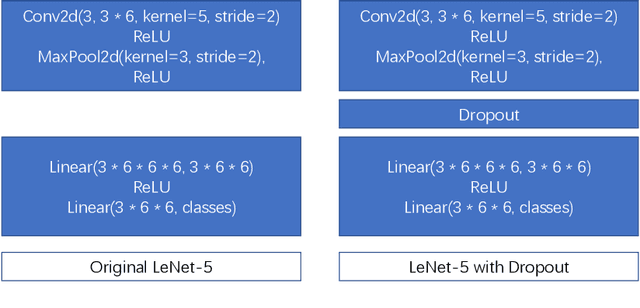

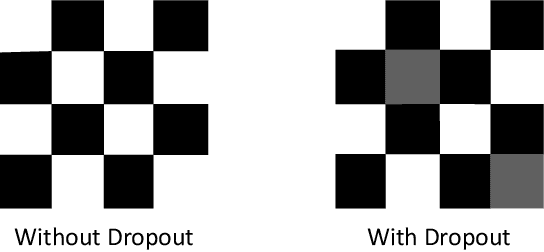

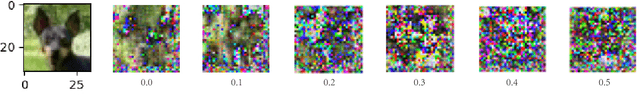

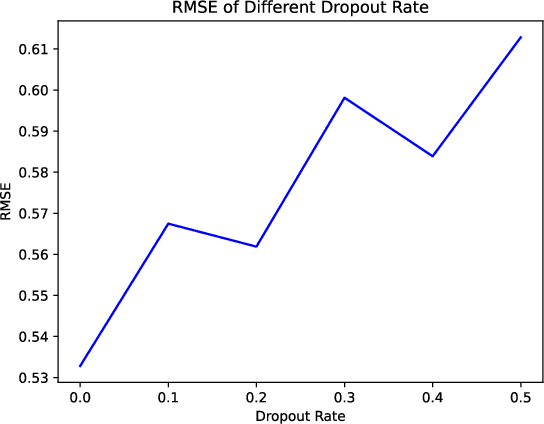

As the scale and size of data have grown significantly, federated learning (Bonawitz et al. [2019]) for high-performance computing and machine learning has become more important than ever before (Abadi et al. [2016]). Sharing gradients was long believed to be a safe way to conceal local training data during the training stage. However, Zhu et al. [2019] demonstrated that raw training data can be recovered from the shared gradients: they generate random dummy data and optimise it to minimise the distance between the gradients it produces and the real gradients. Zhao et al. [2020] push the convergence of this algorithm even further. By replacing the original loss function with cross-entropy loss, they achieve a better fidelity threshold. In this paper, we propose adding a dropout (Srivastava et al. [2014]) layer before feeding the data to the classifier. It is very effective in preventing leakage of raw data: the reconstructed data fails to converge to a small RMSE even after 5,800 epochs with the dropout rate set to 0.5.
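To make the leakage mechanism concrete, the following is a minimal sketch under strong simplifying assumptions, not the paper's experiment. Instead of the optimisation-based attack of Zhu et al. [2019], it uses a single linear classifier with cross-entropy loss, for which the classifier input can be read off the shared gradients in closed form. The model size, the input values, and all function names (`shared_gradients`, `invert_linear_layer`, and so on) are hypothetical and chosen only for illustration.

```python
import math
import random

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def dropout(x, rate, rng):
    """Inverted dropout: zero each entry with probability `rate`, rescale the rest."""
    keep = 1.0 - rate
    return [xi / keep if rng.random() < keep else 0.0 for xi in x]

def shared_gradients(W, b, x, label):
    """Cross-entropy gradients w.r.t. W and b for logits = W x + b."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]
    p = softmax(logits)
    delta = [pi - (1.0 if k == label else 0.0) for k, pi in enumerate(p)]
    grad_W = [[d * xi for xi in x] for d in delta]  # outer product delta * x^T
    grad_b = delta
    return grad_W, grad_b

def invert_linear_layer(grad_W, grad_b):
    """Attacker's view: grad_W[k] = grad_b[k] * x, so x = grad_W[k] / grad_b[k]."""
    k = max(range(len(grad_b)), key=lambda i: abs(grad_b[i]))
    return [g / grad_b[k] for g in grad_W[k]]

def rmse(a, b):
    return math.sqrt(sum((u - v) ** 2 for u, v in zip(a, b)) / len(a))

rng = random.Random(0)
x = [rng.uniform(0.1, 1.0) for _ in range(16)]  # hypothetical private input
W = [[rng.gauss(0.0, 0.1) for _ in range(16)] for _ in range(4)]
b = [0.0] * 4
label = 2

# Without dropout the classifier input is recovered almost exactly.
gW, gb = shared_gradients(W, b, x, label)
rmse_plain = rmse(invert_linear_layer(gW, gb), x)

# With dropout (rate 0.5) before the classifier, the gradients only
# describe the masked, rescaled input, so the error stays large.
gW, gb = shared_gradients(W, b, dropout(x, 0.5, rng), label)
rmse_dropout = rmse(invert_linear_layer(gW, gb), x)

print(f"RMSE without dropout: {rmse_plain:.2e}")
print(f"RMSE with dropout:    {rmse_dropout:.2e}")
```

In this toy setting the attacker still recovers the dropped-out input exactly; what is lost is the original input, since roughly half of its entries are zeroed and the rest are rescaled. How this carries over to deeper models and to the iterative gradient-matching attack is what the paper evaluates, and this sketch makes no claim about those results.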
