Distilling Pixel-Wise Feature Similarities for Semantic Segmentation


Oct 31, 2019

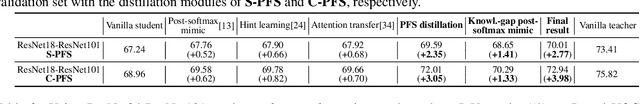

Among neural network compression techniques, knowledge distillation is an effective approach that trains a simpler student network to mimic the output of a larger teacher network. However, most such distillation methods focus on image-level classification, and directly adapting them to semantic segmentation brings only marginal improvements. In this paper, we propose a simple yet effective knowledge representation, referred to as pixel-wise feature similarities (PFS), to tackle the challenging distillation problem in semantic segmentation. PFS encodes spatial structural information for each pixel location of the high-level convolutional features, which makes the distillation process easier to guide. Furthermore, we propose a novel weighted pixel-level soft prediction imitation approach that lets the student network selectively mimic the teacher network's output according to their pixel-wise knowledge gaps. Extensive experiments are conducted on the challenging Pascal VOC 2012, ADE20K, and Pascal Context datasets. Our approach brings significant performance improvements over several strong baselines and achieves new state-of-the-art results.
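The abstract does not give the exact formulation, so the following is only a minimal sketch of the two ideas under stated assumptions. It assumes that PFS can be approximated by the cosine similarity between every pair of pixel feature vectors, that the student is trained to match the teacher's similarity map with a mean squared error, and that the pixel-wise knowledge gap can be measured as a per-pixel KL divergence used to weight the soft-prediction loss. All function names (`cosine_similarity_map`, `pfs_loss`, `weighted_soft_loss`) are hypothetical, and plain Python is used only to keep the example dependency-free.

```python
import math

def cosine_similarity_map(features):
    """features: list of N pixel vectors (N = H*W), each a list of C floats.
    Returns an N x N matrix of pairwise cosine similarities (assumed PFS form)."""
    norms = [math.sqrt(sum(v * v for v in f)) or 1.0 for f in features]
    unit = [[v / n for v in f] for f, n in zip(features, norms)]
    return [[sum(a * b for a, b in zip(u, w)) for w in unit] for u in unit]

def pfs_loss(student_features, teacher_features):
    """Mean squared difference between student and teacher similarity maps.
    The maps are N x N, so the two networks may use different channel counts."""
    s = cosine_similarity_map(student_features)
    t = cosine_similarity_map(teacher_features)
    n = len(s)
    return sum((s[i][j] - t[i][j]) ** 2 for i in range(n) for j in range(n)) / (n * n)

def softmax(logits):
    """Numerically stable softmax over one pixel's class logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]

def weighted_soft_loss(student_logits, teacher_logits):
    """Per-pixel KL(teacher || student), weighted by each pixel's share of the
    total gap (an assumed weighting, not necessarily the paper's)."""
    kls = []
    for s, t in zip(student_logits, teacher_logits):
        ps, pt = softmax(s), softmax(t)
        kls.append(sum(q * math.log(q / max(p, 1e-12))
                       for p, q in zip(ps, pt) if q > 0))
    total = sum(kls)
    if total == 0:
        return 0.0  # student already matches the teacher everywhere
    weights = [k / total for k in kls]
    return sum(w * k for w, k in zip(weights, kls))
```

Because the similarity map depends only on the number of pixels, this kind of loss can compare a narrow student with a wide teacher without an extra projection layer. A practical implementation would compute the same quantities with batched tensor operations.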
