Diffusion for Multi-Embodiment Grasping


Oct 24, 2024

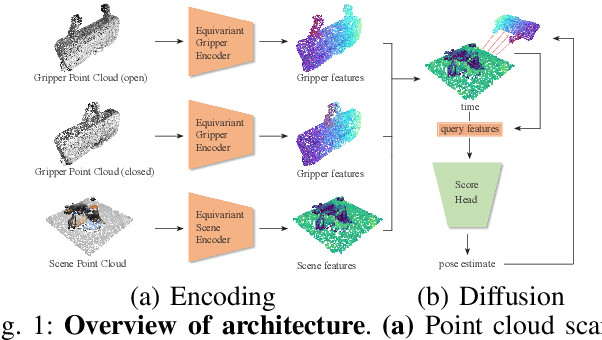

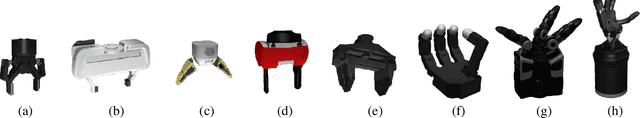

Grasping is a fundamental skill in robotics with diverse applications across medical, industrial, and domestic domains. However, current approaches for predicting valid grasps are often tailored to specific grippers, limiting their applicability when gripper designs change. To address this limitation, we explore the transfer of grasping strategies between various gripper designs, enabling the use of data from diverse sources. In this work, we present an approach based on equivariant diffusion that facilitates gripper-agnostic encoding of scenes containing graspable objects and gripper-aware decoding of grasp poses by integrating gripper geometry into the model. We also develop a dataset generation framework that produces cluttered scenes with variable-sized object heaps, improving the training of grasp synthesis methods. Experimental evaluation on diverse object datasets demonstrates the generalizability of our approach across gripper architectures, ranging from simple parallel-jaw grippers to humanoid hands, outperforming both single-gripper and multi-gripper state-of-the-art methods.
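The abstract does not describe the model's internals. To give a rough idea of how diffusion-based grasp synthesis produces a grasp, here is a generic DDPM ancestral sampling loop over a 3-D grasp position only. It is a minimal sketch built on stated assumptions and is not the authors' model. The linear noise schedule and step count are assumptions. `toy_denoiser` is a hypothetical stand-in for the learned, gripper-aware network: it "knows" a single target grasp, an object center plus a hypothetical per-gripper standoff `gripper_offset`. Grasp orientation, the gripper-agnostic scene encoder, and equivariance are all omitted.

```python
import numpy as np


def make_schedule(num_steps: int = 50, beta_start: float = 1e-4, beta_end: float = 0.02):
    """Linear DDPM noise schedule (an assumption; the abstract does not state one)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars


def toy_denoiser(x_t, t, alpha_bars, object_center, gripper_offset):
    """Hypothetical stand-in for a learned, gripper-conditioned noise predictor.

    Returns the exact noise for a data distribution concentrated on one grasp
    position, object_center + gripper_offset. A real model would infer the
    grasp from the encoded scene and the gripper geometry instead.
    """
    target = object_center + gripper_offset
    return (x_t - np.sqrt(alpha_bars[t]) * target) / np.sqrt(1.0 - alpha_bars[t])


def sample_grasp_position(object_center, gripper_offset, num_steps=50, seed=0):
    """DDPM ancestral sampling of a 3-D grasp position (orientation omitted)."""
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = rng.standard_normal(3)  # start from pure Gaussian noise
    for t in reversed(range(num_steps)):
        eps = toy_denoiser(x, t, alpha_bars, object_center, gripper_offset)
        # Mean of x_{t-1} given x_t and the predicted noise.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        if t > 0:
            # Variance sigma_t^2 = beta_t, one of the standard DDPM choices.
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(3)
        else:
            x = mean  # no noise is added at the final step
    return x


if __name__ == "__main__":
    center = np.array([0.4, 0.0, 0.1])
    parallel_jaw_offset = np.array([0.0, 0.0, 0.12])  # hypothetical standoff
    print(sample_grasp_position(center, parallel_jaw_offset))  # approximately [0.4, 0.0, 0.22]
```

Because the toy denoiser predicts the noise exactly, every run ends at the target grasp. With a learned denoiser, different seeds would instead yield different samples from a distribution over valid grasps, and changing the gripper conditioning would shift that distribution.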
