Differentially Evolving Memory Ensembles: Pareto Optimization based on Computational Intelligence for Embedded Memories on a System Level


Sep 20, 2021

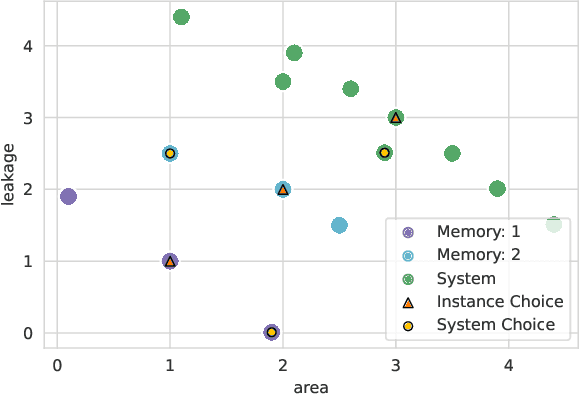

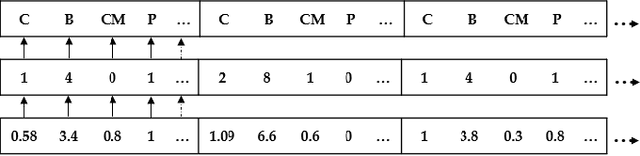

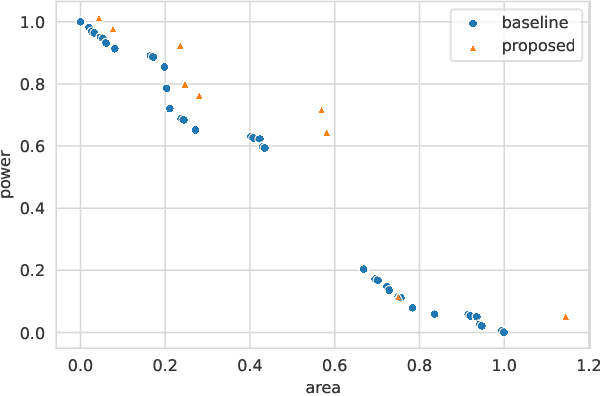

As the relative power, performance, and area (PPA) impact of embedded memories continues to grow, proper parameterization of each of the thousands of memories on a chip is essential. When the parameters of all memories of a product are optimized together as part of a single system, better trade-offs may be achieved than if the same memories were optimized in isolation. However, challenges such as a sparse solution space, conflicting objectives, and computationally expensive PPA estimation impede the application of common optimization heuristics. We show how the memory system optimization problem can be solved through computational intelligence. We apply Pareto-based Differential Evolution to ensure unbiased optimization of multiple PPA objectives. To explore the sparse solution space efficiently, we repair individuals so that they yield feasible parameterizations. PPA is estimated efficiently in large batches by pre-trained regression neural networks. Our framework enables the system optimization of thousands of memories while keeping a small resource footprint. Evaluated on a tractable system, our method finds diverse solutions that lie less than 0.5% away from known global optima.
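The three ingredients named in the abstract (Pareto-based Differential Evolution, repair of infeasible individuals, and batched PPA estimation) can be illustrated with a minimal sketch. This is not the authors' implementation. The function names, the DE/rand/1 mutation scheme, the nearest-allowed-value repair rule, the dominance-based replacement step, and the `toy_ppa` stand-in for the pre-trained regression networks are all assumptions made for illustration, since the abstract does not specify these details.

```python
import numpy as np

def repair(pop, allowed):
    """Snap each parameter to the nearest value in its allowed set (assumed repair rule)."""
    out = np.empty_like(pop, dtype=float)
    for j, vals in enumerate(allowed):
        vals = np.asarray(vals, dtype=float)
        nearest = np.abs(pop[:, j, None] - vals[None, :]).argmin(axis=1)
        out[:, j] = vals[nearest]
    return out

def non_dominated(objs):
    """Boolean mask of rows not dominated by any other row (all objectives minimized)."""
    # le[i, j]: row j is no worse than row i in every objective
    le = (objs[None, :, :] <= objs[:, None, :]).all(axis=2)
    # lt[i, j]: row j is strictly better than row i in at least one objective
    lt = (objs[None, :, :] < objs[:, None, :]).any(axis=2)
    return ~(le & lt).any(axis=1)

def toy_ppa(pop):
    """Hypothetical batched estimator with two conflicting objectives.

    Stands in for the pre-trained regression networks; evaluates the whole
    population in one call.
    """
    area = pop.sum(axis=1)
    delay = (1.0 / pop).sum(axis=1)
    return np.stack([area, delay], axis=1)

def pareto_de(allowed, evaluate, pop_size=20, generations=30,
              f_scale=0.5, cr=0.9, seed=0):
    """DE/rand/1/bin with repair and dominance-based replacement (a simple variant)."""
    rng = np.random.default_rng(seed)
    dim = len(allowed)
    lo = np.array([min(v) for v in allowed], dtype=float)
    hi = np.array([max(v) for v in allowed], dtype=float)
    pop = repair(rng.uniform(lo, hi, size=(pop_size, dim)), allowed)
    fit = evaluate(pop)
    for _ in range(generations):
        # Pick three distinct donors per target, none equal to the target itself
        idx = np.empty((pop_size, 3), dtype=int)
        for i in range(pop_size):
            pick = rng.choice(pop_size - 1, size=3, replace=False)
            pick[pick >= i] += 1
            idx[i] = pick
        mutant = pop[idx[:, 0]] + f_scale * (pop[idx[:, 1]] - pop[idx[:, 2]])
        # Binomial crossover; force at least one gene to come from the mutant
        cross = rng.random((pop_size, dim)) < cr
        cross[np.arange(pop_size), rng.integers(dim, size=pop_size)] = True
        # Repair maps every trial vector back onto a feasible parameterization
        trial = repair(np.where(cross, mutant, pop), allowed)
        trial_fit = evaluate(trial)  # one batched call per generation
        # A trial replaces its parent only if it Pareto-dominates the parent
        better = (trial_fit <= fit).all(axis=1) & (trial_fit < fit).any(axis=1)
        pop[better] = trial[better]
        fit[better] = trial_fit[better]
    mask = non_dominated(fit)
    return pop[mask], fit[mask]

if __name__ == "__main__":
    # Three hypothetical memory parameters, each restricted to powers of two
    allowed = [[1, 2, 4, 8]] * 3
    front, objs = pareto_de(allowed, toy_ppa)
    print(front)
    print(objs)
```

Because no objective is weighted against another, the result is a set of mutually non-dominated parameterizations rather than a single winner, which is the sense in which a Pareto-based selection avoids biasing the search toward one PPA objective.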
