Deontological Ethics By Monotonicity Shape Constraints

Paper and Code

Jan 31, 2020

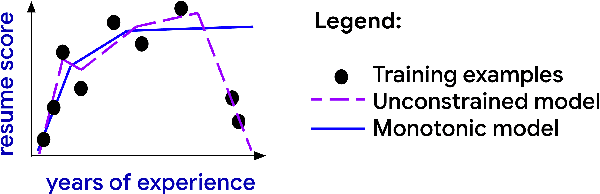

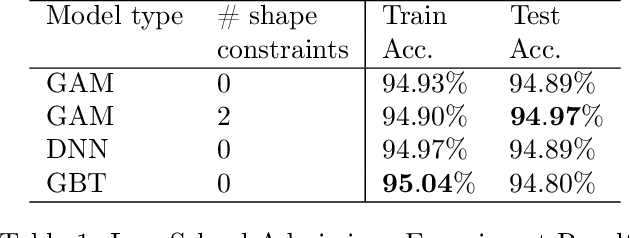

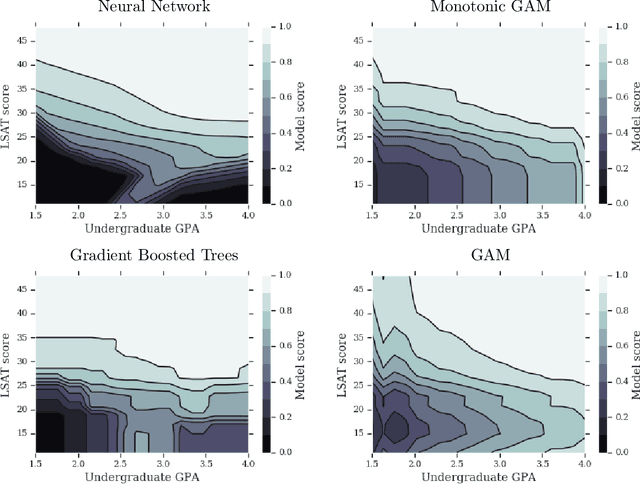

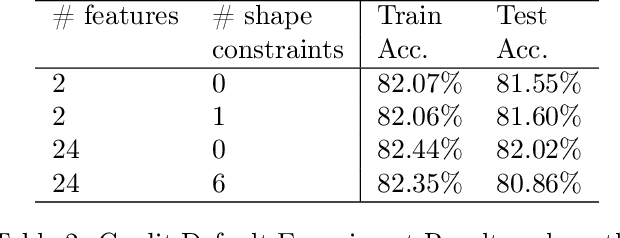

We demonstrate how easy it is for modern machine-learned systems to violate common deontological ethical principles and social norms such as "favor the less fortunate", and "do not penalize good attributes." We propose that in some cases such ethical principles can be incorporated into a machine-learned model by adding shape constraints that constrain the model to respond only positively to relevant inputs. We analyze the relationship between these deontological constraints that act on individuals and the consequentialist group-based fairness goals of one-sided statistical parity and equal opportunity. This strategy works with sensitive attributes that are Boolean or real-valued such as income and age, and can help produce more responsible and trustworthy AI.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge