Demystifying the Effects of Non-Independence in Federated Learning

Paper and Code

Mar 20, 2021

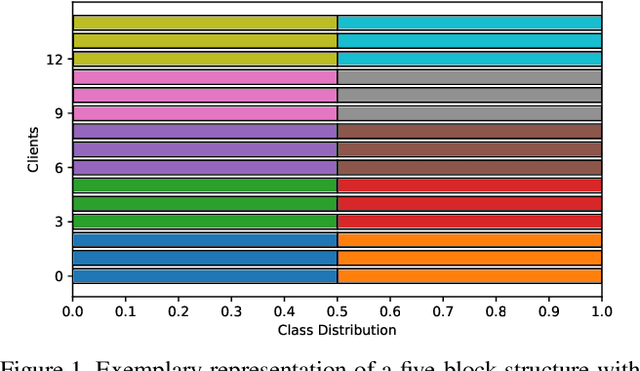

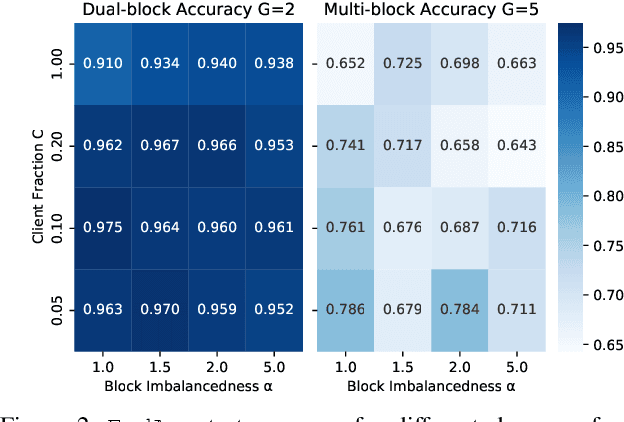

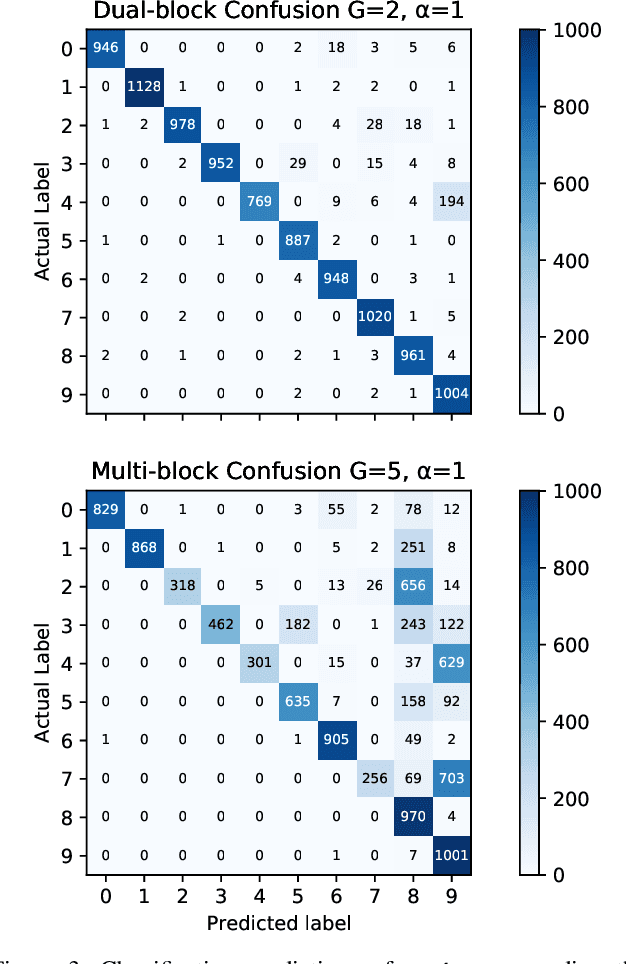

Federated Learning (FL) enables statistical models to be built on user-generated data without compromising data security and user privacy. For this reason, FL is well suited for on-device learning from mobile devices where data is abundant and highly privatized. Constrained by the temporal availability of mobile devices, only a subset of devices is accessible to participate in the iterative protocol consisting of training and aggregation. In this study, we take a step toward better understanding the effect of non-independent data distributions arising from block-cyclic sampling. By conducting extensive experiments on visual classification, we measure the effects of block-cyclic sampling (both standalone and in combination with non-balanced block distributions). Specifically, we measure the alterations induced by block-cyclic sampling from the perspective of accuracy, fairness, and convergence rate. Experimental results indicate robustness to cycling over a two-block structure, e.g., due to time zones. In contrast, drawing data samples dependently from a multi-block structure significantly degrades the performance and rate of convergence by up to 26%. Moreover, we find that this performance degeneration is further aggravated by unbalanced block distributions to a point that can no longer be adequately compensated by higher communication and more frequent synchronization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge