DeFL: Decentralized Weight Aggregation for Cross-silo Federated Learning

Paper and Code

Aug 01, 2022

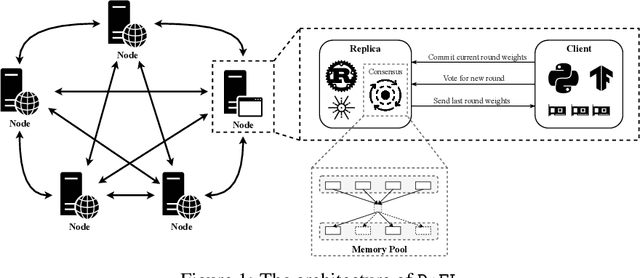

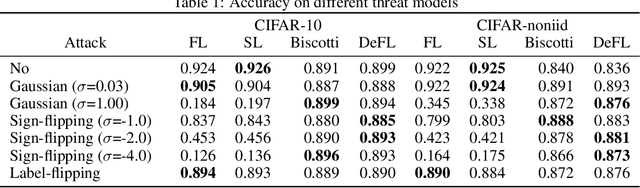

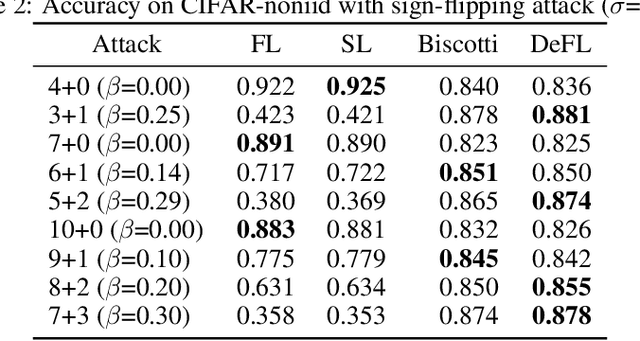

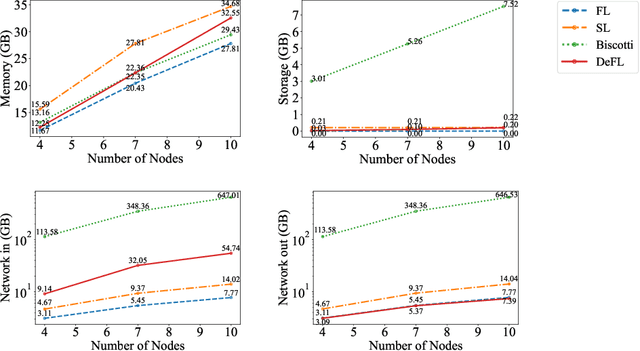

Federated learning (FL) is an emerging promising paradigm of privacy-preserving machine learning (ML). An important type of FL is cross-silo FL, which enables a small scale of organizations to cooperatively train a shared model by keeping confidential data locally and aggregating weights on a central parameter server. However, the central server may be vulnerable to malicious attacks or software failures in practice. To address this issue, in this paper, we propose DeFL, a novel decentralized weight aggregation framework for cross-silo FL. DeFL eliminates the central server by aggregating weights on each participating node and weights of only the current training round are maintained and synchronized among all nodes. We use Multi-Krum to enable aggregating correct weights from honest nodes and use HotStuff to ensure the consistency of the training round number and weights among all nodes. Besides, we theoretically analyze the Byzantine fault tolerance, convergence, and complexity of DeFL. We conduct extensive experiments over two widely-adopted public datasets, i.e. CIFAR-10 and Sentiment140, to evaluate the performance of DeFL. Results show that DeFL defends against common threat models with minimal accuracy loss, and achieves up to 100x reduction in storage overhead and up to 12x reduction in network overhead, compared to state-of-the-art decentralized FL approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge