DeepEdge: A Deep Reinforcement Learning based Task Orchestrator for Edge Computing

Paper and Code

Oct 05, 2021

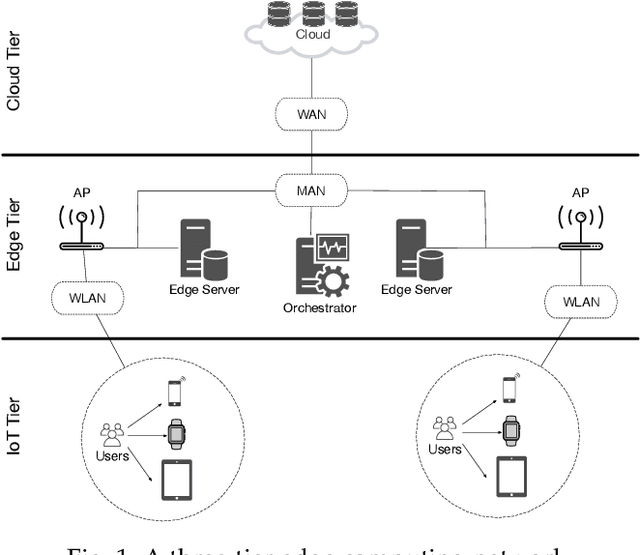

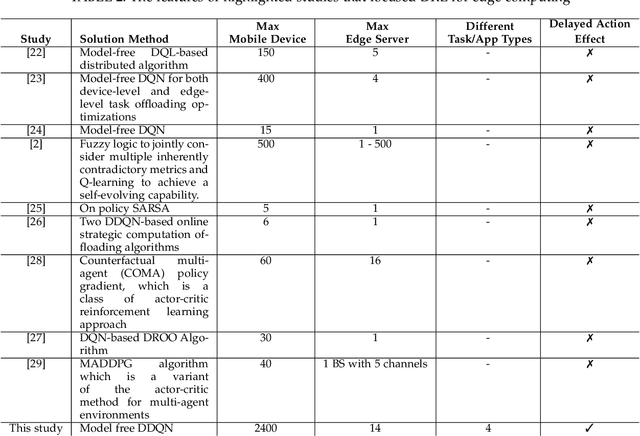

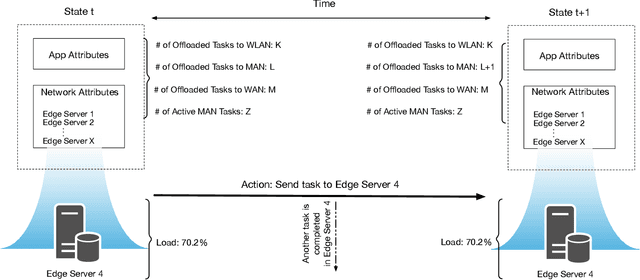

The improvements in the edge computing technology pave the road for diversified applications that demand real-time interaction. However, due to the mobility of the end-users and the dynamic edge environment, it becomes challenging to handle the task offloading with high performance. Moreover, since each application in mobile devices has different characteristics, a task orchestrator must be adaptive and have the ability to learn the dynamics of the environment. For this purpose, we develop a deep reinforcement learning based task orchestrator, DeepEdge, which learns to meet different task requirements without needing human interaction even under the heavily-loaded stochastic network conditions in terms of mobile users and applications. Given the dynamic offloading requests and time-varying communication conditions, we successfully model the problem as a Markov process and then apply the Double Deep Q-Network (DDQN) algorithm to implement DeepEdge. To evaluate the robustness of DeepEdge, we experiment with four different applications including image rendering, infotainment, pervasive health, and augmented reality in the network under various loads. Furthermore, we compare the performance of our agent with the four different task offloading approaches in the literature. Our results show that DeepEdge outperforms its competitors in terms of the percentage of satisfactorily completed tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge