Deep Learning Models for Automatic Summarization

Paper and Code

May 25, 2020

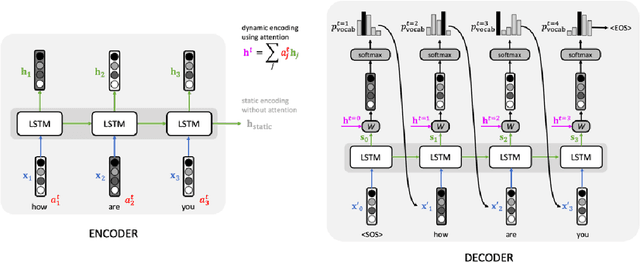

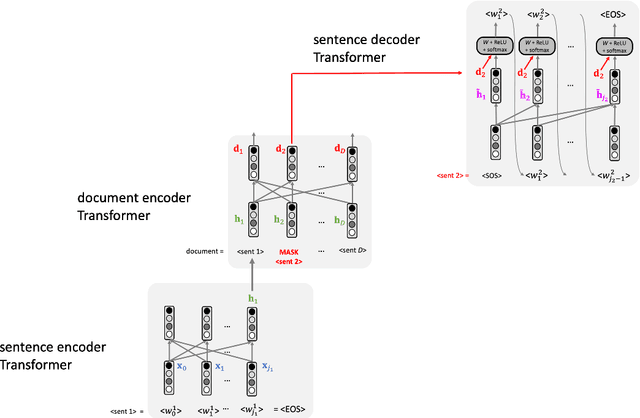

Text summarization is an NLP task that aims to convert a textual document into a shorter one while preserving as much of its meaning as possible. This pedagogical article reviews a number of recent Deep Learning architectures that have helped advance research in this field. In particular, we discuss applications of pointer networks, hierarchical Transformers and Reinforcement Learning. We assume basic familiarity with Seq2Seq architectures and Transformer networks in NLP.

* 13 pages, 5 figures
