Deep Graph Attention Networks

Paper and Code

Oct 21, 2024

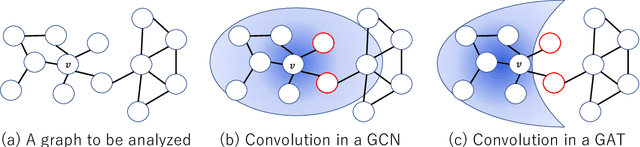

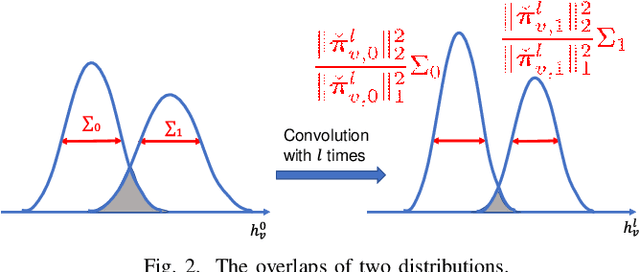

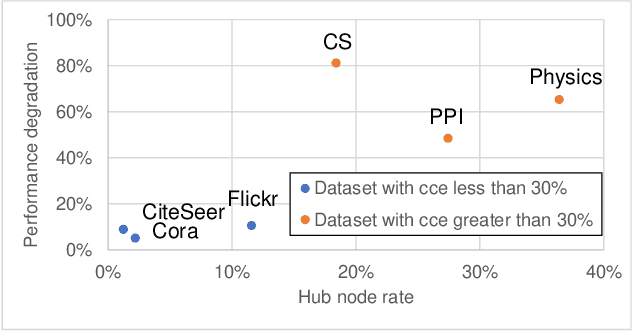

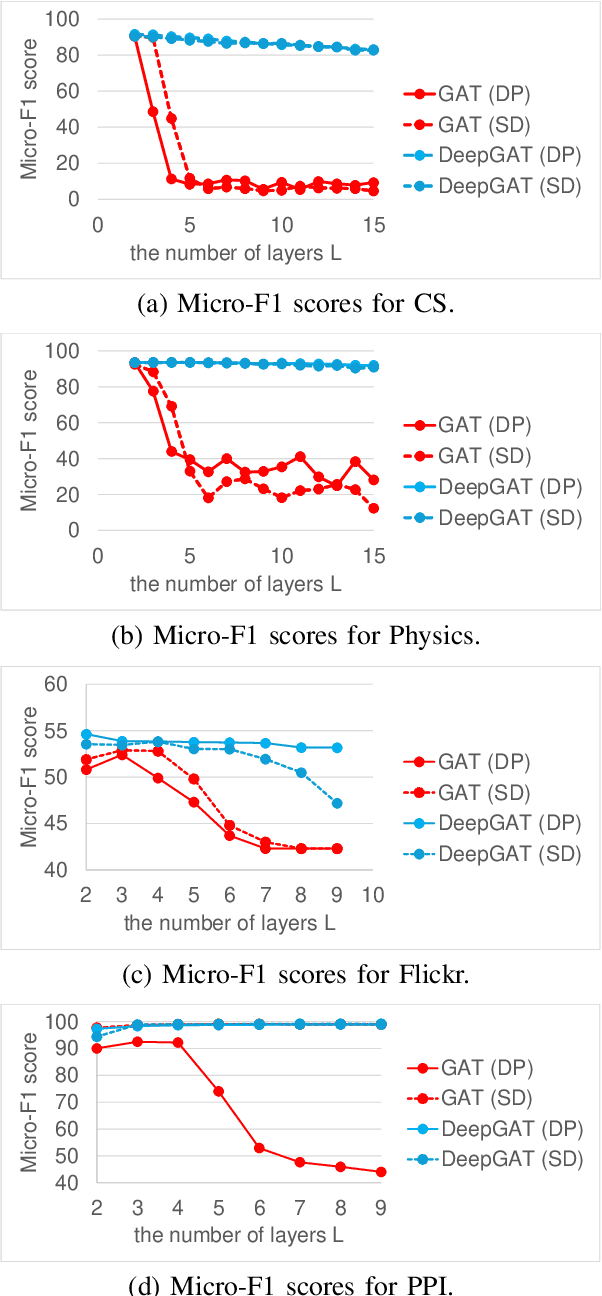

Graphs are useful for representing various real-world objects. However, graph neural networks (GNNs) tend to suffer from over-smoothing, in which the representations of nodes from different classes become similar as the number of layers increases, degrading performance. Constructing an effective graph attention network (GAT), a type of GNN, calls for a method that does not require protracted tuning of the number of layers. We therefore introduce "DeepGAT", a method for predicting the class to which each node belongs in a deep GAT. It avoids over-smoothing in a GAT by ensuring that nodes in different classes do not become similar at any layer. Using DeepGAT to predict class labels, a 15-layer network can be constructed without tuning the number of layers. DeepGAT prevented over-smoothing and produced a 15-layer GAT that performs similarly to a 2-layer GAT, as indicated by the similar attention coefficients. DeepGAT enables a large network to be trained so that it acquires attention coefficients similar to those of a network with few layers. It avoids the over-smoothing problem and removes the need to tune the number of layers, saving time and improving GNN performance.
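
The over-smoothing effect described above can be seen in a toy setting. The following is a minimal sketch and not the DeepGAT method: it replaces learned attention with uniform mean aggregation over neighbors, and the six-node graph, the one-hot features, and the function names (`build_two_cluster_graph`, `class_gap`, `propagate_and_measure`) are all hypothetical choices made for illustration. It tracks how far apart the mean representations of the two classes are after each propagation layer.

```python
import numpy as np


def build_two_cluster_graph():
    """Toy graph (assumed for illustration): two triangles joined by one edge."""
    # Nodes 0-2 belong to class 0, nodes 3-5 to class 1; edge (2, 3) bridges them.
    edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
    num_nodes = 6
    adjacency = np.eye(num_nodes)  # self-loops, as is common in GNN layers
    for i, j in edges:
        adjacency[i, j] = adjacency[j, i] = 1.0
    labels = np.array([0, 0, 0, 1, 1, 1])
    return adjacency, labels


def class_gap(features, labels):
    """Euclidean distance between the mean representations of the two classes."""
    mean_class0 = features[labels == 0].mean(axis=0)
    mean_class1 = features[labels == 1].mean(axis=0)
    return float(np.linalg.norm(mean_class0 - mean_class1))


def propagate_and_measure(num_layers):
    """Apply uniform mean aggregation num_layers times and record the class gap."""
    adjacency, labels = build_two_cluster_graph()
    # Row-normalize so each node averages over itself and its neighbors.
    # A GAT would use learned, non-uniform attention coefficients here instead.
    propagation = adjacency / adjacency.sum(axis=1, keepdims=True)
    # Perfectly separated starting features: one-hot encoding of the class.
    features = np.where(labels[:, None] == 0, [1.0, 0.0], [0.0, 1.0])
    gaps = [class_gap(features, labels)]
    for _ in range(num_layers):
        features = propagation @ features
        gaps.append(class_gap(features, labels))
    return gaps


if __name__ == "__main__":
    for depth, gap in enumerate(propagate_and_measure(15)):
        print(f"layers={depth:2d}  class gap={gap:.4f}")
```

In this sketch the gap between the classes shrinks with every additional layer, even though the initial features separate the classes perfectly. This is the behavior that, according to the abstract, DeepGAT counteracts by keeping nodes of different classes dissimilar at each layer.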
