Deep Gaussian networks for function approximation on data defined manifolds


Aug 01, 2019

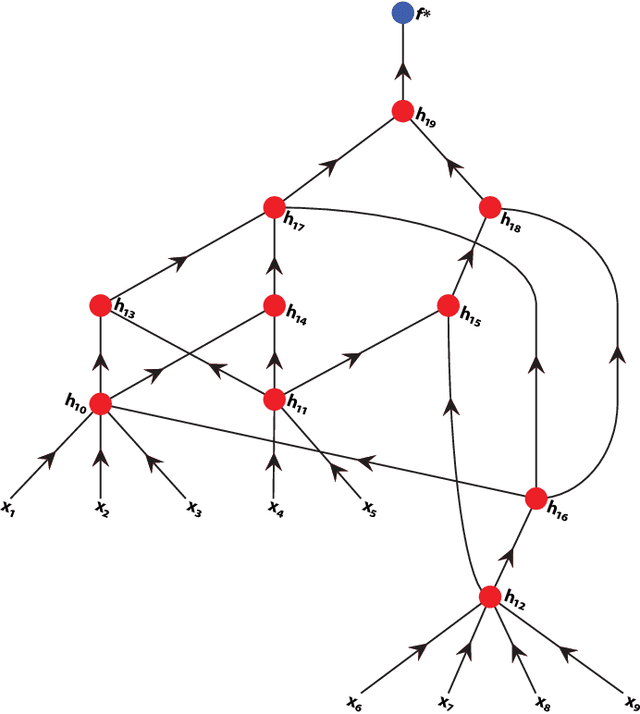

In much of the literature on function approximation by deep networks, the function is assumed to be defined on some known domain, such as a cube or a sphere. In practice, the data might not be dense on these domains, and the approximation-theoretic results are therefore observed to be too conservative. In manifold learning, one assumes instead that the data is sampled from an unknown manifold; i.e., the manifold is defined by the data itself. Function approximation on this unknown manifold is then a two-stage procedure: first, one approximates the Laplace-Beltrami operator (and its eigendecomposition) on this manifold using a graph Laplacian; next, one approximates the target function using the eigenfunctions. In this paper, we propose a more direct approach to function approximation on unknown, data-defined manifolds that does not require computing the eigendecomposition of any operator, and we estimate the degree of approximation in terms of the manifold dimension. This leads to similar results in function approximation using deep networks where each channel evaluates a Gaussian network on a possibly unknown manifold.
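To make the idea of a Gaussian network on a data-defined manifold concrete, the following is a minimal sketch and not the construction from the paper. It assumes the data lies on the unit circle in R^2, takes a subset of the samples as Gaussian centers, and picks the coefficients by ordinary least squares; the helper names, the bandwidth `sigma`, and the target function are all hypothetical choices made for illustration. The point it shows is that the network uses only ambient Euclidean distances between data points, with no graph Laplacian and no eigendecomposition.

```python
import numpy as np


def sample_circle(n, rng):
    """Draw n points from the unit circle, a 1-D manifold embedded in R^2."""
    theta = rng.uniform(0.0, 2.0 * np.pi, size=n)
    return np.column_stack([np.cos(theta), np.sin(theta)]), theta


def gaussian_features(x, centers, sigma):
    """Entries exp(-|x_i - c_k|^2 / (2 sigma^2)), using ambient distances only."""
    sq_dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * sigma ** 2))


def target(theta):
    """Illustrative target function on the circle (an assumption)."""
    return np.sin(3.0 * theta)


rng = np.random.default_rng(0)

# Training data: samples on the manifold and target values there.
x_train, theta_train = sample_circle(400, rng)
y_train = target(theta_train)

# Centers are taken from the data itself; sigma is a hand-picked bandwidth.
centers = x_train[:40]
sigma = 0.3

# Least-squares fit of the Gaussian network coefficients
# (an illustrative choice, not the paper's method).
phi_train = gaussian_features(x_train, centers, sigma)
coeffs, *_ = np.linalg.lstsq(phi_train, y_train, rcond=None)

# Evaluate on fresh samples from the same manifold.
x_test, theta_test = sample_circle(200, rng)
pred = gaussian_features(x_test, centers, sigma) @ coeffs
rmse = float(np.sqrt(np.mean((pred - target(theta_test)) ** 2)))
print(f"test RMSE: {rmse:.4f}")
```

The network never sees the angle `theta` as an input; it is used only to generate the samples and the target values. A deep variant in the sense of the abstract would compose several such networks, one per channel.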
