Cross-Architectural Positive Pairs improve the effectiveness of Self-Supervised Learning


Jan 27, 2023

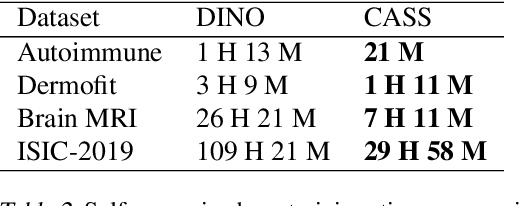

Existing self-supervised techniques have extreme computational requirements and suffer a substantial drop in performance when the batch size or the number of pretraining epochs is reduced. This paper presents Cross Architectural - Self Supervision (CASS), a novel self-supervised learning approach that leverages a Transformer and a CNN simultaneously. Compared with existing state-of-the-art self-supervised learning approaches, we empirically show that, across four diverse datasets, CASS-trained CNNs and Transformers gain an average of 3.8% with 1% labeled data, 5.9% with 10% labeled data, and 10.13% with 100% labeled data, while taking 69% less time. We also show that CASS is much more robust to changes in batch size and training epochs than existing state-of-the-art self-supervised learning approaches. We have open-sourced our code at https://github.com/pranavsinghps1/CASS.
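As the title suggests, the central idea is to treat a CNN's and a Transformer's outputs for the same input as a positive pair. The sketch below illustrates that idea under explicit assumptions: it uses a BYOL-style loss, the batch mean of `2 - 2 * cos(z_cnn, z_vit)`, on L2-normalized embeddings. The function names `l2_normalize` and `cross_arch_positive_pair_loss` are hypothetical, random arrays stand in for real network outputs, and the exact CASS objective, heads and training loop are defined in the linked repository and may differ.

```python
import numpy as np


def l2_normalize(x: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Scale each row of a (batch, dim) array to unit L2 norm."""
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)


def cross_arch_positive_pair_loss(cnn_emb: np.ndarray, vit_emb: np.ndarray) -> float:
    """Hypothetical cross-architecture positive-pair loss (an assumption, not CASS's exact objective).

    Row i of `cnn_emb` (CNN output) and row i of `vit_emb` (Transformer output)
    are assumed to come from the same input image, so each row pair is a positive pair.
    Returns the batch mean of 2 - 2 * cosine_similarity: 0 when the two embeddings
    point in the same direction, 4 when they point in opposite directions.
    """
    if cnn_emb.shape != vit_emb.shape:
        raise ValueError("CNN and Transformer embeddings must have the same shape")
    z_cnn = l2_normalize(cnn_emb)
    z_vit = l2_normalize(vit_emb)
    cos = np.sum(z_cnn * z_vit, axis=1)  # per-sample cosine similarity
    return float(np.mean(2.0 - 2.0 * cos))


# Usage: random arrays stand in for the two networks' embeddings of one batch.
rng = np.random.default_rng(0)
cnn_out = rng.normal(size=(4, 128))
vit_out = rng.normal(size=(4, 128))
print(cross_arch_positive_pair_loss(cnn_out, vit_out))        # unrelated embeddings: roughly 2
print(cross_arch_positive_pair_loss(cnn_out, 3.0 * cnn_out))  # same direction: close to 0
```

Normalizing before comparing makes the loss depend only on direction, so scale differences between the CNN and Transformer outputs do not affect it. In an actual training setup the loss would be minimized with an autodiff framework such as PyTorch so that gradients update the networks; this NumPy sketch only evaluates the loss.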
