Counting and Segmenting Sorghum Heads

Paper and Code

May 30, 2019

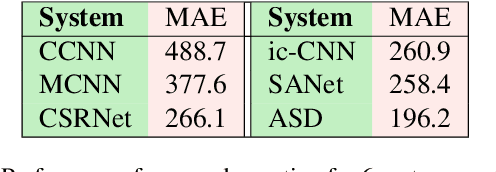

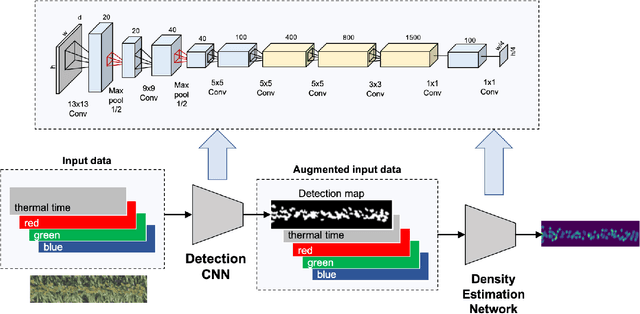

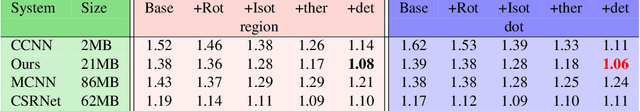

Phenotyping is the process of measuring an organism's observable traits. Manual phenotyping of crops is labor-intensive, time-consuming, costly, and error-prone. Accurate, automated, high-throughput phenotyping can relieve a huge burden in the crop breeding pipeline. In this paper, we propose a scalable, high-throughput approach to automatically count and segment panicles (heads), a key phenotype, from aerial sorghum crop imagery. Our counting approach uses a novel deep convolutional neural network architecture whose training target is the image density map obtained from dot or region annotations. We also propose a novel instance segmentation algorithm that uses the estimated density map to identify individual panicles in the presence of occlusion. On real aerial sorghum images, we obtain a mean absolute error (MAE) of 1.06 for counting, which is lower than that of well-known crowd-counting models such as CCNN, MCNN, and CSRNet. The instance segmentation model also produces respectable results, which should ultimately help reduce the manual annotation workload for future data.
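To make the counting target concrete, here is a minimal sketch of how a density map can be built from dot annotations and how MAE is computed over per-image counts. It is an illustration under stated assumptions, not the paper's implementation: the Gaussian kernel, the `sigma` value, the function names `density_map_from_dots` and `mean_absolute_error`, and the sample coordinates are all hypothetical, and the network itself is not reproduced.

```python
import numpy as np


def density_map_from_dots(points, height, width, sigma=4.0):
    """Build a density map from dot annotations given as (row, col) pairs.

    Assumption: each dot is spread with an isotropic Gaussian of width
    `sigma` and normalized to sum to 1 over the image, so the whole map
    sums to the number of annotated heads. Points are assumed to lie
    inside the image.
    """
    rows = np.arange(height)[:, None]
    cols = np.arange(width)[None, :]
    density = np.zeros((height, width), dtype=np.float64)
    for r, c in points:
        kernel = np.exp(-((rows - r) ** 2 + (cols - c) ** 2) / (2.0 * sigma ** 2))
        density += kernel / kernel.sum()
    return density


def mean_absolute_error(true_counts, predicted_counts):
    """Average absolute difference between true and predicted counts."""
    true_counts = np.asarray(true_counts, dtype=np.float64)
    predicted_counts = np.asarray(predicted_counts, dtype=np.float64)
    return float(np.mean(np.abs(true_counts - predicted_counts)))


# Hypothetical dot annotations for three panicles in a 64x64 crop.
dots = [(10, 12), (30, 40), (31, 44)]
target = density_map_from_dots(dots, height=64, width=64)

# Summing the density map recovers the head count.
estimated_count = target.sum()
```

Because each dot contributes exactly one unit of mass, a network trained to regress such a map can report a count by summing its output, even when neighboring heads overlap.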
