Core Sampling Framework for Pixel Classification

Paper and Code

Dec 06, 2016

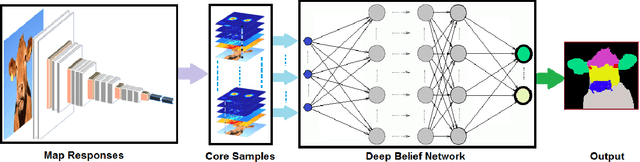

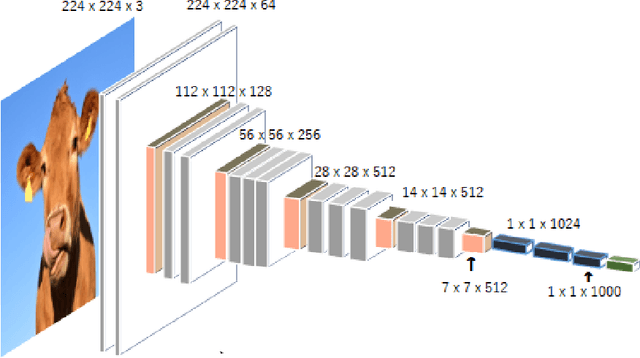

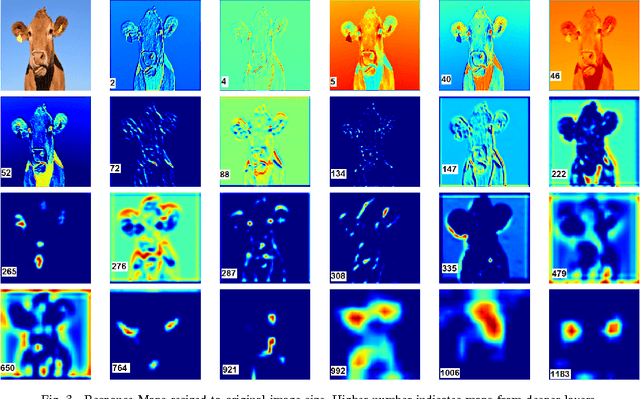

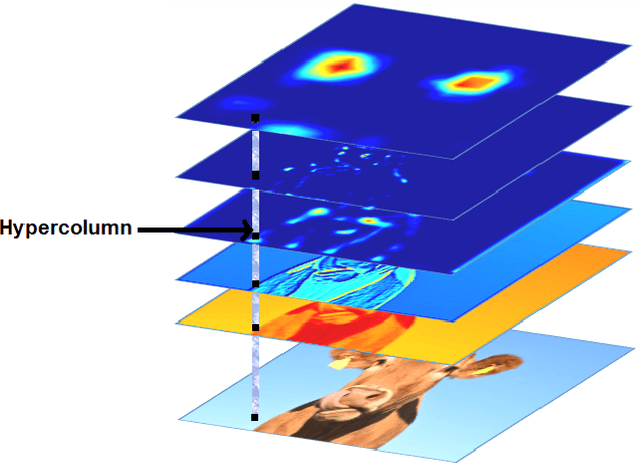

The intermediate activation maps of a Convolutional Neural Network (CNN) carry contextual information about the input image. In this paper, we present a core sampling framework that uses the activation maps from several layers of a pretrained network as features for a second neural network, via transfer learning, to build an understanding of an input image. Our framework creates a representation that combines features from the test data with the contextual knowledge gained from the responses of the pretrained network, processes it, and feeds it to a separate Deep Belief Network. We use this representation to extract more information from an image at the pixel level, and thereby gain an understanding of the whole image. We experimentally demonstrate the usefulness of our framework by using a pretrained VGG-16 model to perform segmentation on the BAERI dataset of Synthetic Aperture Radar (SAR) imagery and on the CAMVID dataset.
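As a rough illustration of the per-pixel representation described above, the following sketch stacks the raw pixel value with the values of activation maps from several layers at the same location. It is a toy under stated assumptions, not the paper's implementation: the function names `upsample_nearest` and `core_sample` are hypothetical, the activation maps are hand-written stand-ins for VGG-16 responses, nearest-neighbour resizing is assumed as the way to align maps with the input, and the Deep Belief Network that would consume the features is omitted.

```python
def upsample_nearest(fmap, out_h, out_w):
    """Resize a 2-D map (list of rows) to out_h x out_w by nearest-neighbour lookup."""
    in_h, in_w = len(fmap), len(fmap[0])
    return [
        [fmap[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def core_sample(image, layer_maps):
    """Return one feature vector per pixel.

    image      : 2-D list of pixel values (single channel, for brevity).
    layer_maps : list of layers; each layer is a list of 2-D activation maps,
                 possibly at a lower resolution than the image.
    Each feature vector is the pixel value followed by the value of every
    activation map at that pixel, after resizing the map to the image size.
    """
    h, w = len(image), len(image[0])
    # Bring every map from every layer to the input resolution.
    resized = [upsample_nearest(m, h, w) for maps in layer_maps for m in maps]
    return [
        [[image[r][c]] + [m[r][c] for m in resized] for c in range(w)]
        for r in range(h)
    ]


# Hypothetical stand-ins: a 4x4 image, one 2x2 map from a shallow layer,
# and two 1x1 maps from a deeper layer.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
layer_maps = [
    [[[1, 2], [3, 4]]],
    [[[5]], [[6]]],
]

features = core_sample(image, layer_maps)
print(features[1][2])  # feature vector for the pixel at row 1, column 2
```

In a real pipeline the per-pixel vectors in `features` would be flattened into a training matrix for the downstream classifier, one row per pixel.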
