Contextual Lensing of Universal Sentence Representations

Feb 20, 2020

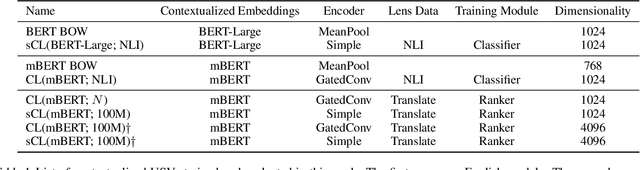

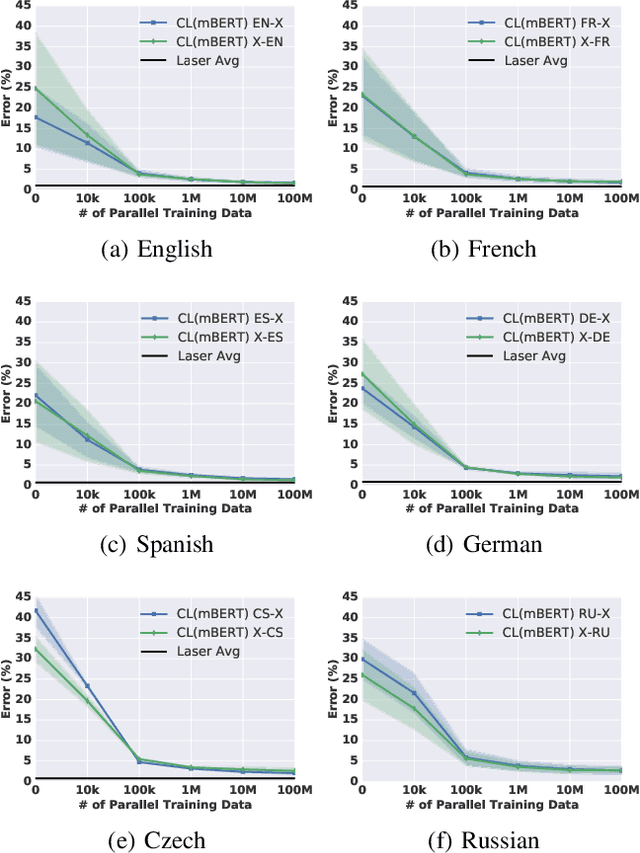

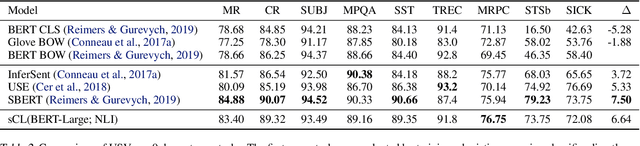

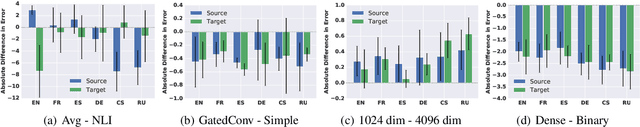

What makes a universal sentence encoder universal? The notion of a generic encoder of text appears to be at odds with the inherent contextualization and non-permanence of language use in a dynamic world. Nevertheless, mapping sentences into generic fixed-length vectors for downstream similarity and retrieval tasks has been fruitful, particularly for multilingual applications. How do we resolve this tension? In this work we propose Contextual Lensing, a methodology for inducing context-oriented universal sentence vectors. We break the construction of universal sentence vectors into two parts: a core, variable-length sentence matrix representation, and an adaptable 'lens' from which fixed-length vectors are induced as a function of the lens context. We show that, given a core universal matrix representation, notions of language similarity can be focused into a small number of lens parameters. For example, we demonstrate the ability to encode translation similarity of sentences across several languages into a single weight matrix, even when the core encoder has not seen parallel data.
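To make the split between the core matrix and the lens concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's implementation: the abstract only says that the lens can be as small as a single weight matrix, so the mean pooling, the L2 normalization, and the names `apply_lens`, `sentence_matrix`, and `lens_weights` are all hypothetical choices made for this example.

```python
import math
from typing import List

Matrix = List[List[float]]


def apply_lens(sentence_matrix: Matrix, lens_weights: Matrix) -> List[float]:
    """Induce a fixed-length vector from a variable-length sentence matrix.

    sentence_matrix: one row per token, each row of dimension d (core encoder output).
    lens_weights:    a d x k weight matrix playing the role of the lens.
    Assumption: project every token, mean-pool over tokens, then L2-normalize.
    """
    if not sentence_matrix:
        raise ValueError("sentence_matrix must contain at least one token vector")
    out_dim = len(lens_weights[0])
    pooled = [0.0] * out_dim
    for token_vec in sentence_matrix:
        for j in range(out_dim):
            # Dot product of the token vector with column j of the lens.
            pooled[j] += sum(x * lens_weights[i][j] for i, x in enumerate(token_vec))
    pooled = [v / len(sentence_matrix) for v in pooled]
    norm = math.sqrt(sum(v * v for v in pooled)) or 1.0
    return [v / norm for v in pooled]


# Two toy "sentences" of different lengths (2 and 3 tokens, d = 3).
short_sentence = [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]]
long_sentence = [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0], [0.5, 0.5, 1.0]]

# A toy 3 x 2 lens; in practice these would be the learned lens parameters.
lens = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

short_vec = apply_lens(short_sentence, lens)
long_vec = apply_lens(long_sentence, lens)
```

Both outputs have the same length regardless of how many tokens went in, and swapping in a different `lens` changes the induced vectors without touching the core representation.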
