Construction of Latent Descriptor Space and Inference Model of Hand-Object Interactions

Paper and Code

Sep 12, 2017

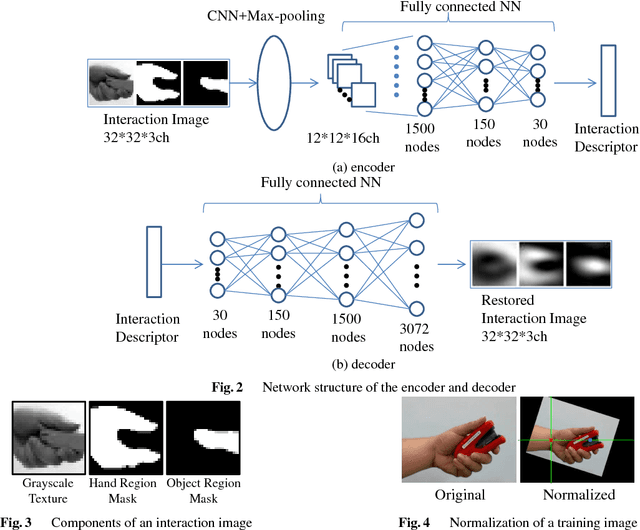

Appearance-based generic object recognition is a challenging problem because all possible appearances of objects cannot be registered, especially as new objects are produced every day. Function of objects, however, has a comparatively small number of prototypes. Therefore, function-based classification of new objects could be a valuable tool for generic object recognition. Object functions are closely related to hand-object interactions during handling of a functional object; i.e., how the hand approaches the object, which parts of the object and contact the hand, and the shape of the hand during interaction. Hand-object interactions are helpful for modeling object functions. However, it is difficult to assign discrete labels to interactions because an object shape and grasping hand-postures intrinsically have continuous variations. To describe these interactions, we propose the interaction descriptor space which is acquired from unlabeled appearances of human hand-object interactions. By using interaction descriptors, we can numerically describe the relation between an object's appearance and its possible interaction with the hand. The model infers the quantitative state of the interaction from the object image alone. It also identifies the parts of objects designed for hand interactions such as grips and handles. We demonstrate that the proposed method can unsupervisedly generate interaction descriptors that make clusters corresponding to interaction types. And also we demonstrate that the model can infer possible hand-object interactions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge