Comparison of Generative Learning Methods for Turbulence Modeling

Paper and Code

Nov 25, 2024

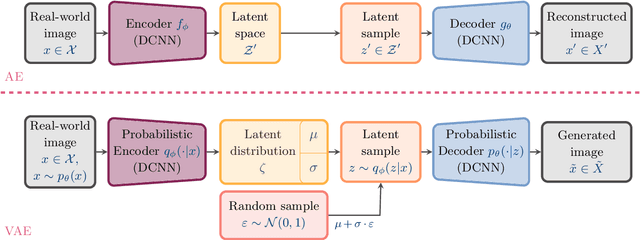

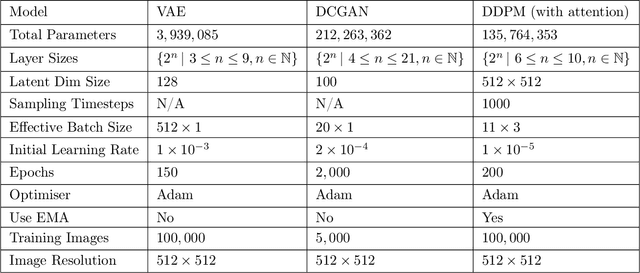

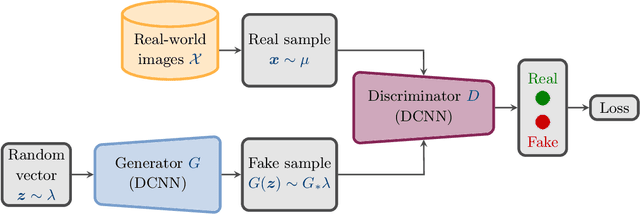

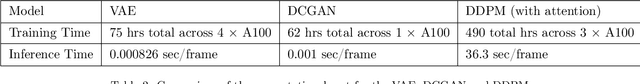

Numerical simulations of turbulent flows present significant challenges in fluid dynamics due to their complexity and high computational cost. High resolution techniques such as Direct Numerical Simulation (DNS) and Large Eddy Simulation (LES) are generally not computationally affordable, particularly for technologically relevant problems. Recent advances in machine learning, specifically in generative probabilistic models, offer promising alternatives for turbulence modeling. This paper investigates the application of three generative models - Variational Autoencoders (VAE), Deep Convolutional Generative Adversarial Networks (DCGAN), and Denoising Diffusion Probabilistic Models (DDPM) - in simulating a 2D K\'arm\'an vortex street around a fixed cylinder. Training data was obtained by means of LES. We evaluate each model's ability to capture the statistical properties and spatial structures of the turbulent flow. Our results demonstrate that DDPM and DCGAN effectively replicate the flow distribution, highlighting their potential as efficient and accurate tools for turbulence modeling. We find a strong argument for DCGAN, as although they are more difficult to train (due to problems such as mode collapse), they gave the fastest inference and training time, require less data to train compared to VAE and DDPM, and provide the results most closely aligned with the input stream. In contrast, VAE train quickly (and can generate samples quickly) but do not produce adequate results, and DDPM, whilst effective, is significantly slower at both inference and training time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge