Communication-Based Decomposition Mechanisms for Decentralized MDPs


Oct 31, 2011

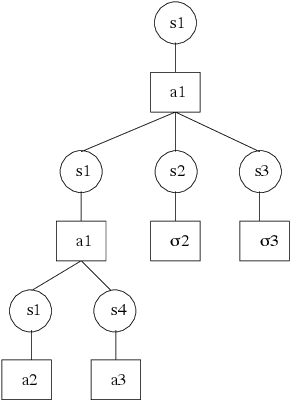

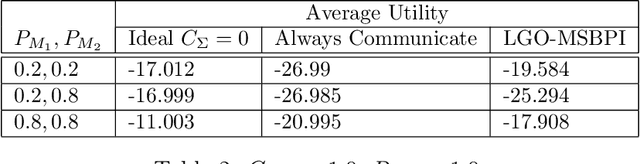

Multi-agent planning in stochastic environments can be framed formally as a decentralized Markov decision problem. Many real-life distributed problems that arise in manufacturing, multi-robot coordination, and information-gathering scenarios can be formalized using this framework. However, finding the optimal solution in the general case is computationally hard, which limits the applicability of recently developed algorithms. This paper provides a practical approach to solving decentralized control problems when communication among the decision makers is possible but costly. We develop the notion of a communication-based mechanism that allows us to decompose a decentralized MDP into multiple single-agent problems. In this framework, referred to as a decentralized semi-Markov decision process with direct communication (Dec-SMDP-Com), agents operate separately between communications. We show that finding an optimal mechanism is equivalent to optimally solving a Dec-SMDP-Com. We also provide a heuristic search algorithm that converges to the optimal decomposition. Restricting the decomposition to specific types of local behaviors significantly reduces the complexity of planning. In particular, we present a polynomial-time algorithm for the case in which individual agents perform goal-oriented behaviors between communications. The paper concludes with an additional tractable algorithm that enables the introduction of human knowledge, thereby reducing the overall problem to finding the best time to communicate. Empirical results show that these approaches provide good approximate solutions.
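The last algorithm reduces planning to choosing when to communicate once the local behaviors are fixed. The toy sketch below illustrates only that trade-off and is not the paper's algorithm. The model and all names are hypothetical assumptions: each agent reaches its local goal independently with a per-step probability (`p1`, `p2`), the agents communicate exactly once at step `t`, the team earns `reward` only if both goals were reached by then, and each communication and each elapsed step carry fixed costs. The sketch evaluates every candidate time up to a horizon and keeps the best one.

```python
"""Toy sketch: choosing when two agents should communicate.

Hypothetical model (an assumption, not taken from the paper): between
communications each agent runs a fixed goal-oriented local behavior that
reaches its local goal on each step independently with probability p_i.
The agents communicate once, at step t. If both have reached their goals by
then, the team earns `reward`. The communication costs `comm_cost` and every
elapsed step costs `step_cost`.
"""


def prob_goal_reached(p: float, t: int) -> float:
    """Probability that an agent with per-step success probability p has
    reached its goal within t steps (CDF of a geometric distribution)."""
    return 1.0 - (1.0 - p) ** t


def expected_value(t: int, p1: float, p2: float, reward: float,
                   comm_cost: float, step_cost: float) -> float:
    """Expected team value of communicating exactly once, at step t.
    The agents act independently, so the joint success probability is the
    product of the individual probabilities."""
    joint = prob_goal_reached(p1, t) * prob_goal_reached(p2, t)
    return joint * reward - comm_cost - step_cost * t


def best_communication_time(horizon: int, **params: float) -> tuple[int, float]:
    """Exhaustively evaluate t = 1..horizon and return (best_t, best_value)."""
    return max(
        ((t, expected_value(t, **params)) for t in range(1, horizon + 1)),
        key=lambda pair: pair[1],
    )


if __name__ == "__main__":
    t, v = best_communication_time(
        horizon=20, p1=0.3, p2=0.5, reward=10.0, comm_cost=1.0, step_cost=0.2
    )
    print(f"communicate at step {t}, expected value {v:.4f}")
```

With the parameters above it prints `communicate at step 8, expected value 6.7867`. Communicating earlier risks finding that one agent has not yet reached its goal, while waiting longer accumulates step cost. Exhaustive search is adequate only because this toy value function is cheap to evaluate; it says nothing about the complexity of the general problem.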
