Combining Contrastive Learning and Knowledge Graph Embeddings to develop medical word embeddings for the Italian language

Paper and Code

Nov 09, 2022

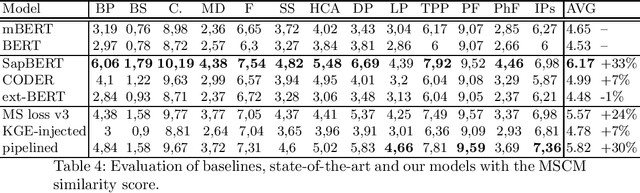

Word embeddings play a significant role in today's Natural Language Processing tasks and applications. While pre-trained models may be directly employed and integrated into existing pipelines, they are often fine-tuned to better fit with specific languages or domains. In this paper, we attempt to improve available embeddings in the uncovered niche of the Italian medical domain through the combination of Contrastive Learning (CL) and Knowledge Graph Embedding (KGE). The main objective is to improve the accuracy of semantic similarity between medical terms, which is also used as an evaluation task. Since the Italian language lacks medical texts and controlled vocabularies, we have developed a specific solution by combining preexisting CL methods (multi-similarity loss, contextualization, dynamic sampling) and the integration of KGEs, creating a new variant of the loss. Although without having outperformed the state-of-the-art, represented by multilingual models, the obtained results are encouraging, providing a significant leap in performance compared to the starting model, while using a significantly lower amount of data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge