Classifying States of the Hopfield Network with Improved Accuracy, Generalization, and Interpretability

Paper and Code

Mar 04, 2025

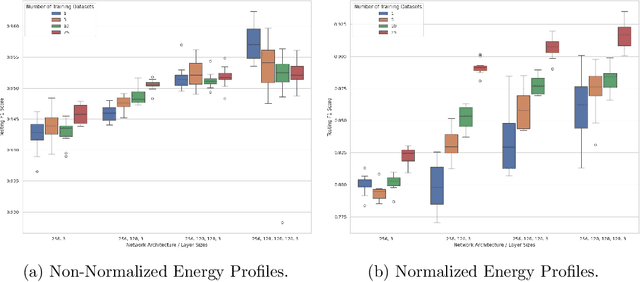

We extend the existing work on Hopfield network state classification, employing more complex models that remain interpretable, such as densely-connected feed-forward deep neural networks and support vector machines. The states of the Hopfield network can be grouped into several classes, including learned (those presented during training), spurious (stable states that were not learned), and prototype (stable states that were not learned but are representative of a subset of learned states). It is often useful to determine which class a given state belongs to, for example to ignore spurious states when retrieving from the network. Previous research has approached the state classification task with simple linear methods, most notably the stability ratio. We deepen the research on classifying states from prototype-regime Hopfield networks, investigating how varying the factors that strengthen prototypes influences the state classification task. We study the generalizability of different classification models when trained on states derived from different prototype tasks. For example, can a classifier trained on states from a Hopfield network with 10 prototypes classify states from a network with 20 prototypes? We find that simple models often outperform the stability ratio while remaining interpretable. These models require surprisingly little training data and generalize exceptionally well to states generated by a range of Hopfield networks, even those that were trained on exceedingly different datasets.
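To make the learned and spurious classes concrete, the following is a minimal sketch and not the paper's method. It assumes bipolar (+1/-1) states and the standard Hebbian learning rule, neither of which is stated in the abstract, and it labels states by direct lookup against the training set rather than with the stability ratio or a trained classifier. The function names `train_hebbian`, `is_stable`, and `classify_state` are hypothetical, and the prototype class is left out because it needs a notion of representativeness that the abstract does not define.

```python
def train_hebbian(patterns):
    """Build a weight matrix with the Hebbian rule (assumed here), zero diagonal."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def is_stable(weights, state):
    """A state is stable if no unit's local field disagrees in sign with the unit."""
    for i, row in enumerate(weights):
        field = sum(w * s for w, s in zip(row, state))
        if field * state[i] < 0:
            return False
    return True


def classify_state(weights, state, learned):
    """Label a state as 'unstable', 'learned', or 'spurious' (stable but not learned)."""
    if not is_stable(weights, state):
        return "unstable"
    if tuple(state) in learned:
        return "learned"
    return "spurious"


# Two orthogonal 8-unit patterns used as the training set (illustrative data only).
p1 = (1, 1, 1, 1, -1, -1, -1, -1)
p2 = (1, -1, 1, -1, 1, -1, 1, -1)
learned = {p1, p2}
weights = train_hebbian([p1, p2])

inverse_p1 = tuple(-s for s in p1)     # stable, but never presented in training
probe = (1, 1, 1, 1, 1, 1, 1, -1)      # an arbitrary state that is not stable

labels = [classify_state(weights, s, learned) for s in (p1, inverse_p1, probe)]
print(labels)  # ['learned', 'spurious', 'unstable']
```

The lookup against `learned` is only possible when the training set is known. The classification task described above matters precisely when it is not, which is where a measure computed from the state and the weights, or a trained model, would take the place of that lookup.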
