Can Stochastic Gradient Langevin Dynamics Provide Differential Privacy for Deep Learning?


Oct 12, 2021

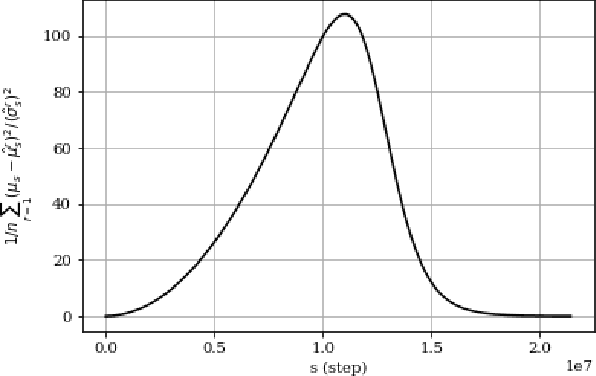

Bayesian learning via Stochastic Gradient Langevin Dynamics (SGLD) has been suggested for differentially private learning. While previous research provides differential privacy bounds for SGLD at the initial steps of the algorithm or when close to convergence, the question of what differential privacy guarantees can be made in between remains unanswered. This interim region is of great importance, especially for Bayesian neural networks, because convergence to the posterior is hard to guarantee. This paper shows that using SGLD might result in unbounded privacy loss in this interim region, even when sampling from the posterior is as differentially private as desired.
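For readers unfamiliar with SGLD, the sketch below shows the standard update rule on a toy problem: inferring the mean of a one-dimensional Gaussian with known variance. It is an illustration only, not the construction analyzed in the paper. The function names (`sgld_step`, `run_sgld`), the Gaussian prior, the fixed step size, and all parameter values are assumptions made for the example. The iterates produced between the first steps and convergence are the "interim region" the abstract refers to.

```python
import math
import random


def sgld_step(theta, batch, n_total, step_size, rng, prior_var=1.0, noise_var=1.0):
    """One SGLD update for the mean of a 1-D Gaussian (toy model, assumed here)."""
    # Gradient of the log prior, assuming theta ~ N(0, prior_var).
    grad_prior = -theta / prior_var
    # Minibatch gradient of the log likelihood, rescaled to the full dataset size.
    grad_lik = (n_total / len(batch)) * sum(x - theta for x in batch) / noise_var
    # Injected Gaussian noise whose variance equals the step size.
    noise = rng.gauss(0.0, math.sqrt(step_size))
    return theta + 0.5 * step_size * (grad_prior + grad_lik) + noise


def run_sgld(data, n_steps, batch_size, step_size, seed=0):
    """Run SGLD with a fixed step size and return every iterate."""
    rng = random.Random(seed)
    theta = 0.0
    samples = []
    for _ in range(n_steps):
        # Draw a minibatch without replacement.
        batch = rng.sample(data, batch_size)
        theta = sgld_step(theta, batch, len(data), step_size, rng)
        samples.append(theta)
    return samples


if __name__ == "__main__":
    data_rng = random.Random(1)
    data = [data_rng.gauss(2.0, 1.0) for _ in range(1000)]
    samples = run_sgld(data, n_steps=2000, batch_size=50, step_size=1e-3)
    tail = samples[1000:]
    print("mean of later iterates:", sum(tail) / len(tail))
```

In this toy setting the later iterates fluctuate around the posterior mean, while the early iterates still depend strongly on the initialization and on which data points were sampled.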

View paper on

OpenReview
