Breaking the Softmax Bottleneck for Sequential Recommender Systems with Dropout and Decoupling


Oct 11, 2021

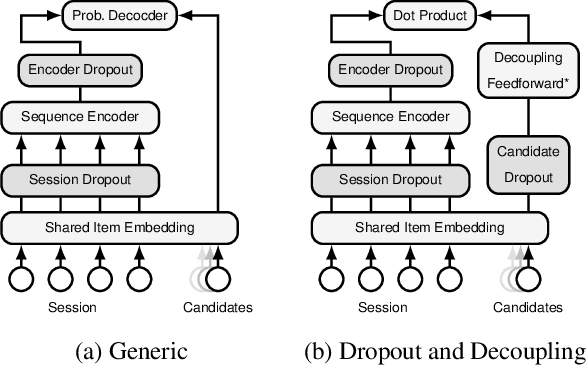

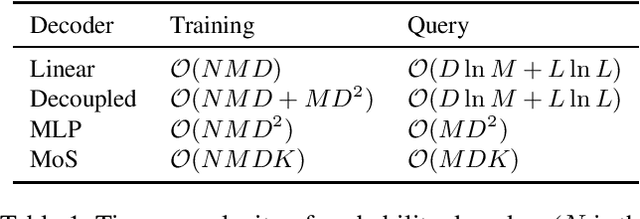

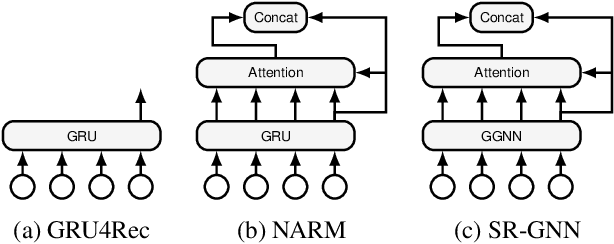

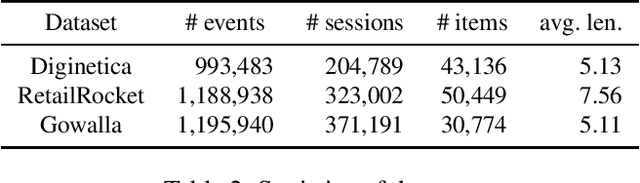

The Softmax bottleneck was first identified in language modeling as a theoretical limit on the expressivity of Softmax-based models. As one of the most widely used ways to produce output probabilities, Softmax-based models have found a wide range of applications, including session-based recommender systems (SBRSs). A Softmax-based model consists of a Softmax function on top of a final linear layer. The bottleneck has been shown to be caused by rank deficiency in the final linear layer, owing to its connection with matrix factorization. In this paper, we show that there are more aspects to the Softmax bottleneck in SBRSs. Contrary to common belief, overfitting does happen in the final linear layer, even though overfitting is usually associated with complex networks. Furthermore, we identify that the common technique of sharing item embeddings between session sequences and the candidate pool creates a tight coupling that also contributes to the bottleneck. We propose a simple yet effective method, Dropout and Decoupling (D&D), to alleviate these problems. Our experiments show that our method significantly improves the accuracy of a variety of Softmax-based SBRS algorithms. Compared with more computationally expensive methods, such as MLP and MoS (Mixture of Softmaxes), our method performs on par with, and at times better than, those methods while keeping the same time complexity as Softmax-based models.
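The rank-deficiency argument in the abstract can be illustrated with a small sketch. It is not the paper's code: the names `H` (session representations), `W` (item embeddings in the final linear layer), and the sizes are hypothetical, and small random integers stand in for learned weights. The point is only that the logit matrix, being a product of two factors with inner dimension `dim`, cannot have rank greater than `dim`, however many sessions or items there are.

```python
from fractions import Fraction
import random


def rank(matrix):
    """Exact matrix rank via Gaussian elimination over rationals."""
    m = [[Fraction(v) for v in row] for row in matrix]
    n_rows, n_cols = len(m), len(m[0])
    r = 0  # number of pivots found so far
    for c in range(n_cols):
        # Find a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        # Eliminate column c from all rows below the pivot row.
        for i in range(r + 1, n_rows):
            factor = m[i][c] / m[r][c]
            if factor != 0:
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
        if r == n_rows:
            break
    return r


# Hypothetical sizes: 8 sessions, 10 candidate items, embedding size 3.
num_sessions, num_items, dim = 8, 10, 3
rng = random.Random(0)

# H: one hidden vector per session; W: one embedding per candidate item.
H = [[rng.randint(-5, 5) for _ in range(dim)] for _ in range(num_sessions)]
W = [[rng.randint(-5, 5) for _ in range(dim)] for _ in range(num_items)]

# Final linear layer: logits[s][i] = <H[s], W[i]>, i.e. logits = H @ W^T.
logits = [[sum(h * w for h, w in zip(hs, wi)) for wi in W] for hs in H]

logit_rank = rank(logits)
print(f"logit matrix is {num_sessions}x{num_items}, rank = {logit_rank}, dim = {dim}")
```

Applying Softmax row by row turns these logits into probabilities, but the set of log-probability matrices the model can reach remains constrained by this low-rank factorization, which is the matrix-factorization connection the abstract refers to.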
