Bio-inspired visual attention for silicon retinas based on spiking neural networks applied to pattern classification


May 31, 2021

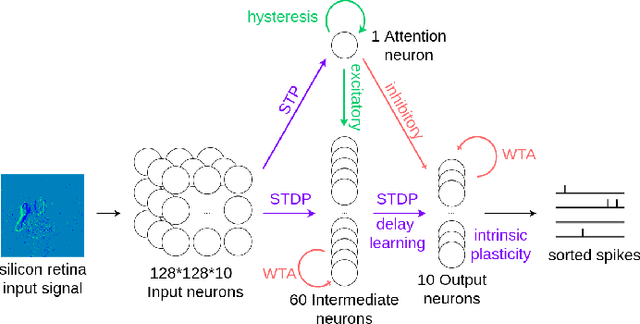

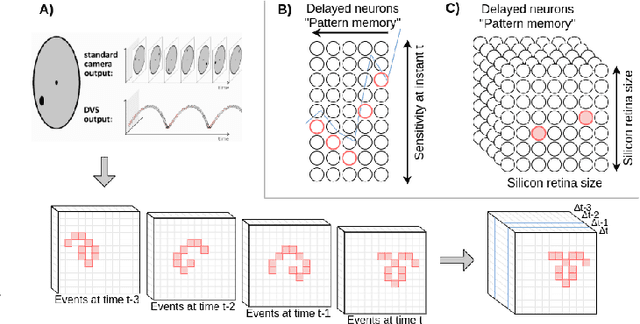

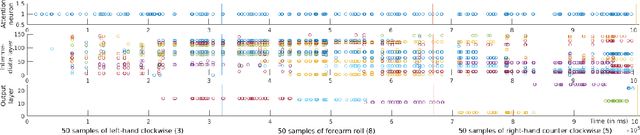

Visual attention can be defined as the behavioral and cognitive process of selectively focusing on a discrete aspect of sensory cues while disregarding other perceivable information. This biological mechanism, and more specifically saliency detection, has long been used in multimedia indexing to restrict analysis to the relevant parts of images or videos for further processing. The recent advent of silicon retinas (or event cameras: sensors that measure pixel-wise changes in brightness and output asynchronous events accordingly) raises the question of how to adapt attention and saliency to the unconventional output of such sensors. Silicon retinas aim to reproduce the behaviour of the biological retina. In that respect, they produce events that are punctual in time, which can be construed as neural spikes and interpreted as such by a neural network. In particular, Spiking Neural Networks (SNNs) are an asynchronous type of artificial neural network closer to biology than traditional artificial networks, mainly because they seek to mimic the dynamics of the neural membrane and of action potentials over time. SNNs receive and process information in the form of spike trains, which makes them suitable candidates for the efficient processing and classification of the event patterns measured by silicon retinas. In this paper, we review the biological background behind the attentional mechanism and introduce a case study of event video classification with SNNs that uses a biology-grounded, low-level computational attention mechanism, with interesting preliminary results.
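To make the idea of "events as spikes" concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a common SNN neuron model, updated only when an event arrives. It is an illustration under stated assumptions, not the network or attention mechanism used in the paper: the event tuple format `(t_ms, x, y, polarity)`, the millisecond time unit, and the parameters `tau_ms`, `threshold` and `weight` are all hypothetical choices, and polarity is ignored for simplicity.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical event format (an assumption, not from the paper):
# (timestamp in ms, x, y, polarity in {-1, +1}).
Event = Tuple[float, int, int, int]


@dataclass
class LIFNeuron:
    """Leaky integrate-and-fire neuron with illustrative, hypothetical parameters."""
    tau_ms: float = 20.0     # membrane time constant (assumed)
    threshold: float = 1.0   # firing threshold (assumed)
    weight: float = 0.4      # potential added by one input event (assumed)
    v: float = 0.0           # current membrane potential
    last_t: float = 0.0      # time of the previous update

    def receive(self, t: float) -> bool:
        """Process one input spike at time t; return True if the neuron fires."""
        # Event-driven update: apply the exponential leak accumulated since the
        # previous event, instead of simulating every time step.
        self.v *= math.exp(-(t - self.last_t) / self.tau_ms)
        self.last_t = t
        # Integrate the incoming spike.
        self.v += self.weight
        if self.v >= self.threshold:
            self.v = 0.0  # reset the membrane potential after an output spike
            return True
        return False


def run(events: List[Event]) -> List[float]:
    """Feed time-sorted events to a single neuron and return its output spike times."""
    neuron = LIFNeuron()
    out: List[float] = []
    for t, _x, _y, _polarity in events:  # location and polarity ignored here
        if neuron.receive(t):
            out.append(t)
    return out


if __name__ == "__main__":
    dense = [(0.0, 5, 5, 1), (1.0, 5, 5, 1), (2.0, 5, 5, 1)]
    sparse = [(0.0, 5, 5, 1), (100.0, 5, 5, 1), (200.0, 5, 5, 1)]
    print(run(dense))   # [2.0]: three events in quick succession cross the threshold
    print(run(sparse))  # []: the potential leaks away between widely spaced events
```

The leak is what makes timing matter: the same number of events either triggers an output spike or not depending on how close together they arrive, which is the kind of temporal sensitivity that makes SNNs a natural fit for asynchronous event streams.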
