Bias in ontologies -- a preliminary assessment

Jan 20, 2021

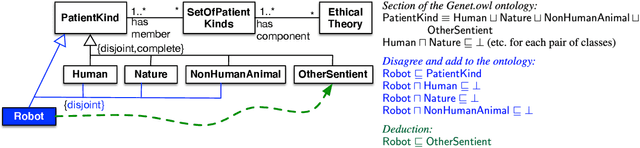

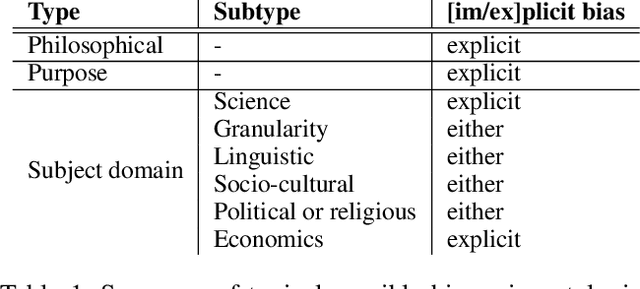

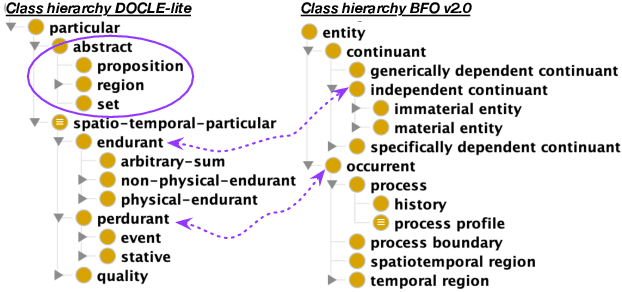

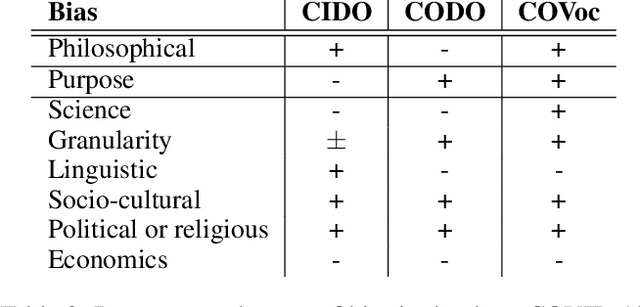

Logical theories in the form of ontologies and similar artefacts in computing and IT are used for structuring, annotating, and querying data, among other tasks, and thereby influence data analytics through what is fed into the algorithms. Algorithmic bias is a well-known notion, but what does bias mean in the context of ontologies that provide a structuring mechanism for an algorithm's input? What are the sources of bias there, and how would they manifest themselves in ontologies? We examine and enumerate eight types of bias relevant for ontologies, and whether they are explicit or implicit. These types are illustrated with examples from extant production-level ontologies and samples from the literature. We then assess three concurrently developed COVID-19 ontologies for bias and detect different subsets of these types in each one, to a greater or lesser extent. This first characterisation aims to contribute to a sensitisation to ethical aspects of ontologies, primarily regarding the representation of information and knowledge.
