Bias and Fairness in Computer Vision Applications of the Criminal Justice System

Aug 05, 2022

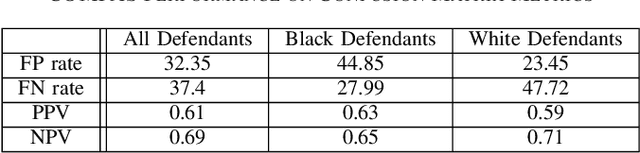

Discriminatory practices involving AI-driven police work have been the subject of much controversy in the past few years, with algorithms such as COMPAS, PredPol and ShotSpotter being accused of unfairly impacting minority groups. At the same time, fairness in machine learning, and in computer vision in particular, has been the subject of a growing number of academic works. In this paper, we examine how these areas intersect. We provide information on how these practices have come to exist and the difficulties in alleviating them. We then examine three applications currently in development to understand what risks they pose to fairness and how those risks can be mitigated.

* © 2021 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.
