Avoiding Generative Model Writer's Block With Embedding Nudging


Aug 28, 2024

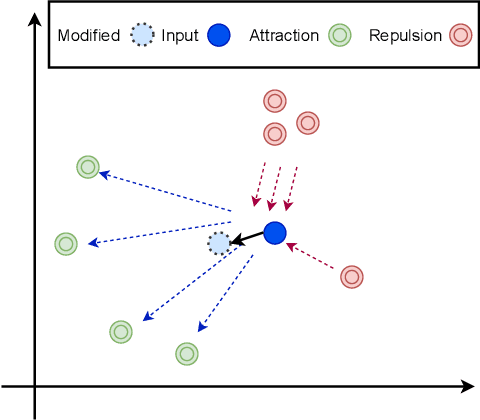

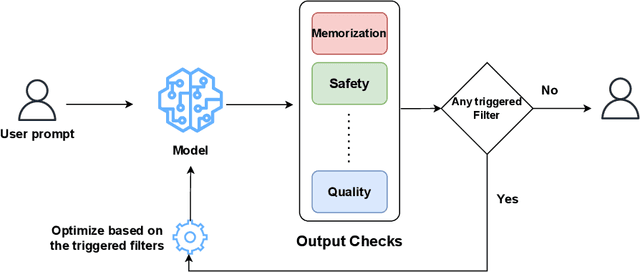

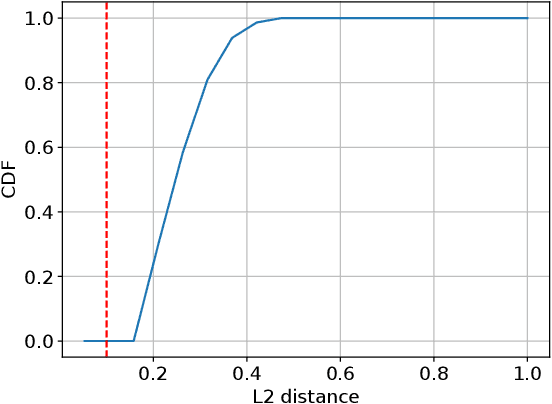

Since their introduction, generative image models have become a global phenomenon, unlocking many new capabilities, from enabling new forms of art to opening new vectors of abuse. One of the challenging issues with generative models is controlling the generation process, especially to prevent specific classes or instances of outputs. There are several reasons to control the output of generative models, ranging from privacy and safety concerns to application limitations and user preferences. To address memorization and privacy challenges, considerable research has been dedicated to filtering either the prompts or the outputs of these models. What these solutions have in common is that, ultimately, they stop the model from producing any output, which limits its usability. In this paper, we propose a method that addresses this usability issue by steering away from unwanted concepts when they are detected in the model's output, while still generating an output. In particular, we focus on latent diffusion image generation models and show how to prevent them from generating particular images while still generating similar images with limited overhead. We target issues such as image memorization and demonstrate our technique's effectiveness through qualitative and quantitative evaluations. Our method successfully prevents the generation of memorized training images while maintaining image quality and relevance comparable to the unmodified model.
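The abstract describes the method only at a high level: detect an unwanted concept in the output, then steer away from it instead of refusing. The following is a minimal, hypothetical sketch of such a detect-and-steer loop, not the paper's algorithm. It assumes that outputs and blocked items (for example, embeddings of memorized training images) are vectors compared by cosine similarity, and that "nudging" means shrinking the conditioning embedding's component along the closest blocked direction before regenerating. The names `generate_with_nudging`, `threshold`, `step`, and `max_tries` are illustrative, and `generate` is a stand-in for a real model.

```python
import math
from typing import Callable, List, Optional, Sequence, Tuple

Vector = List[float]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na > 0 and nb > 0 else 0.0


def generate_with_nudging(
    cond: Sequence[float],
    generate: Callable[[Vector], Vector],
    blocked: Sequence[Sequence[float]],
    threshold: float = 0.95,
    step: float = 0.5,
    max_tries: int = 5,
) -> Tuple[Optional[Vector], int]:
    """Hypothetical detect-and-steer loop (an assumption, not the paper's method).

    Generates from a conditioning embedding; if the output is too similar to
    any blocked embedding, it shrinks the conditioning component along that
    blocked direction and retries instead of refusing outright.
    Returns (output, number_of_nudges), or (None, max_tries) if every attempt
    stays too close to a blocked item.
    """
    current = list(cond)
    for nudges in range(max_tries + 1):
        out = generate(current)
        sims = [cosine(out, b) for b in blocked]
        if not sims or max(sims) < threshold:
            return out, nudges  # output is far enough from every blocked item
        if nudges == max_tries:
            break  # give up after the last allowed attempt
        # Unit vector pointing at the closest blocked embedding.
        b = blocked[sims.index(max(sims))]
        norm = math.sqrt(sum(x * x for x in b))
        unit = [x / norm for x in b]
        # Remove a fraction `step` of the conditioning's projection onto it.
        proj = sum(c * u for c, u in zip(current, unit))
        current = [c - step * proj * u for c, u in zip(current, unit)]
    return None, max_tries
```

With an identity function as a toy generator, the conditioning vector `[1.0, 0.2]` starts too close to the blocked direction `[1.0, 0.0]` (cosine about 0.98) and needs one nudge: `generate_with_nudging([1.0, 0.2], lambda e: list(e), [[1.0, 0.0]])` returns `([0.5, 0.2], 1)`. A conditioning vector exactly parallel to a blocked item cannot be moved off that direction by this projection step, so the loop falls back to returning `None`; a real system would need a richer perturbation.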
